Intro: Why Energy Efficient Data Centers Design Is Your 2026 Survival Strategy

Picture this: your data center’s power bill just jumped 25% because AI workloads doubled rack density to 50 kW and beyond. Meanwhile, regulators are eyeing carbon taxes, customers demand green credentials, and investors want PUE dashboards in quarterly reports. Welcome to energy-efficient data center design in 2026, where efficiency isn’t optional; it’s the baseline.

Global data center electricity consumption reached 415 TWh in 2024, about 1.5% of total worldwide electricity demand, and that number is on track to more than double to 945 TWh by 2030. Estimates for 2026 alone point to over 500 TWh consumed globally, roughly 2% of all electricity produced on Earth. These are not abstract figures. They represent real grid pressure, higher operating costs, and growing scrutiny from regulators, investors, and enterprise customers alike. And AI is the main culprit: GPU clusters guzzle power like there’s no tomorrow!

Hyperscale data centers have doubled their energy consumption over the past few years, and US data centers alone now consume 183 TWh annually, more than 4% of the nation’s total electricity use. AI training workloads, GPU-dense clusters, and always-on inference pipelines are pushing power densities far beyond what traditional facility designs were ever built to handle.

But here’s the upside: data center energy efficiency strategies from proven operators can cut your TCO by 30%+ while boosting performance. Think PUE dropping from 1.58 (global average) to 1.1, renewable PPAs locking in cheap green power, and AI optimizing cooling in real time. With an effective strategy and the right partner, you can build sustainable data center solutions that follow green data center design practices.

Understanding PUE: The North Star of Data Center Energy Efficiency

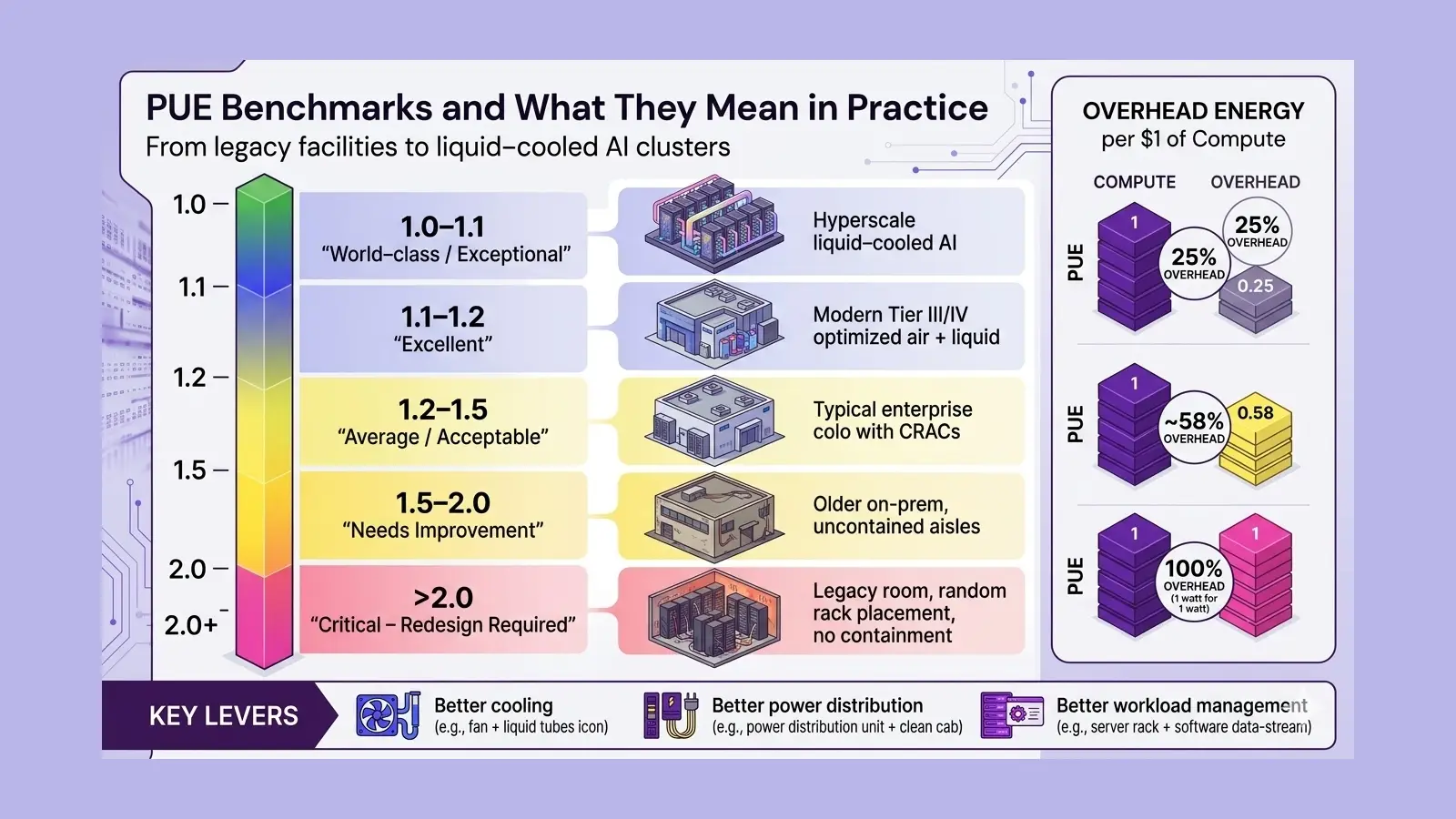

Before you can optimize a data center, you need to measure it. Power Usage Effectiveness (PUE) is the universal benchmark the industry uses. The formula is straightforward:

PUE = Total Facility Energy ÷ IT Equipment Energy

A PUE of 1.0 would be a perfect score: every watt goes to running IT equipment, with zero overhead. In reality, that is physically impossible, because cooling and power delivery always consume some energy. The global average PUE hovers around 1.58, but leading Hyperscalers routinely push below 1.2, and best-in-class liquid-cooled facilities are hitting PUE scores as low as 1.04. For context, a facility with a PUE of 1.25 spends an extra 25 cents on overhead energy for every dollar of energy delivered to IT equipment.
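To make the arithmetic concrete, here is a minimal Python sketch of the PUE formula. The meter readings are hypothetical values chosen to reproduce a PUE of 1.25, and the function names are illustrative rather than part of any DCIM product.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that never reaches IT equipment."""
    return 1.0 - 1.0 / pue_value


# Hypothetical monthly meter readings, in kWh.
total_kwh = 1_250_000
it_kwh = 1_000_000

score = pue(total_kwh, it_kwh)
print(f"PUE: {score:.2f}")
print(f"Overhead per unit of IT energy: {score - 1:.2f}")
print(f"Overhead as a share of total energy: {overhead_share(score):.1%}")
```

Note the two ways of expressing overhead: at a PUE of 1.25, overhead is 25% of the IT energy but 20% of the total facility energy.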

What counts as a good PUE? Here is a practical reference:

| PUE Score | Efficiency Rating | Typical Facility Type | Example Operators / Sites |
|---|---|---|---|
| 1.0-1.1 | World-class / Exceptional | Hyperscale, liquid-cooled AI clusters | Google, Meta |
| 1.1-1.2 | Excellent | Modern Tier III/IV, optimized cooling | Microsoft Azure |
| 1.2-1.5 | Average – Acceptable | Most enterprise colocation facilities | Most colocation providers |
| 1.5-2.0 | Needs Improvement | Aging or unoptimized data centers | Older sites |
| >2.0 | Critical – Redesign Required | Legacy facilities, poor airflow design | Sites needing urgent upgrade |
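If you want to apply these bands automatically in a report, a small lookup is enough. This is a sketch only: the labels are shortened versions of the ratings in the table, and treating each upper bound as inclusive is an assumption, since the table does not say which band a boundary value such as 1.2 belongs to.

```python
# Upper bound of each band and a shortened rating label.
# Assumption: upper bounds are inclusive (a PUE of exactly 1.2 rates "Excellent").
PUE_BANDS = [
    (1.1, "World-class"),
    (1.2, "Excellent"),
    (1.5, "Average"),
    (2.0, "Needs Improvement"),
]


def rate_pue(pue_value: float) -> str:
    """Map a PUE score to the rating band from the reference table."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    for upper_bound, label in PUE_BANDS:
        if pue_value <= upper_bound:
            return label
    return "Critical"


for sample in (1.04, 1.18, 1.58, 2.3):
    print(sample, "->", rate_pue(sample))
```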

Pro tip: Track PUE monthly via DCIM tools. Small tweaks (blanking panels, fan curves) yield 5-10% gains fast, which makes them a key part of your data center energy efficiency strategy.

While benchmarks are useful, achieving sub-1.2 PUE consistently requires coordinated optimization across cooling, power, and workload layers.

Aptly Technology helps enterprises implement PUE optimization strategies using DCIM insights, infrastructure tuning, and AI-driven workload placement to move from average (1.5+) to best-in-class efficiency and to support energy-efficient data center design.

Start With Site Selection: Geography Drives Efficiency

The most overlooked lever in energy efficient data center design is site selection. Where you build determines ambient temperature, cooling costs, water availability, and access to renewable energy, all before a single server is racked.

Cooler climates dramatically reduce mechanical cooling requirements. That is why Hyperscalers have long favored locations like Scandinavia, Iceland, and the Pacific Northwest. Facebook’s data center in Luleå, Sweden, for instance, uses outside air for cooling for the majority of the year, driving its PUE below 1.1 consistently.

Energy-efficient data center design starts with location. The wrong site can mean 20% higher cooling costs for the life of the facility. The right site can mean free cooling for 80% of the year.

Key Site Selection Criteria:

- Ambient temperature: Cooler climates cut cooling energy year-round — look for annual average temperatures below 15°C where feasible.

- Renewable energy access: Proximity to wind, solar, or hydro capacity enables Power Purchase Agreements (PPAs) at scale.

- Water availability: Evaporative cooling systems require significant water — assess local scarcity and regulations upfront.

- Grid reliability: High-uptime grids reduce the need for diesel backup generators, which carry both cost and carbon penalties.

- Natural cooling potential: Sites with natural airflow corridors or access to cold groundwater can leverage free cooling cycles effectively.
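One way to compare candidate sites on the ambient-temperature criterion is to estimate how much of the year outside air is cool enough for free cooling. The sketch below is a rough screening aid under stated assumptions: the 15°C threshold is a placeholder borrowed from the guideline above (the real cutoff depends on the cooling design and supply-air setpoints), and the temperature samples are made up for illustration. In practice you would feed in a full year of hourly weather data.

```python
from typing import Iterable


def free_cooling_fraction(hourly_temps_c: Iterable[float],
                          threshold_c: float = 15.0) -> float:
    """Share of sampled hours at or below the free-cooling threshold."""
    temps = list(hourly_temps_c)
    if not temps:
        raise ValueError("need at least one temperature sample")
    cool_hours = sum(1 for temp in temps if temp <= threshold_c)
    return cool_hours / len(temps)


# Hypothetical samples for two candidate sites (degrees Celsius).
site_a = [2, 5, 8, 11, 14, 16, 18, 12, 7, 3]
site_b = [14, 18, 22, 27, 31, 33, 29, 24, 19, 15]

print(f"Site A free-cooling share: {free_cooling_fraction(site_a):.0%}")
print(f"Site B free-cooling share: {free_cooling_fraction(site_b):.0%}")
```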

Cooling Solutions for Data Centers: Choosing the Right System

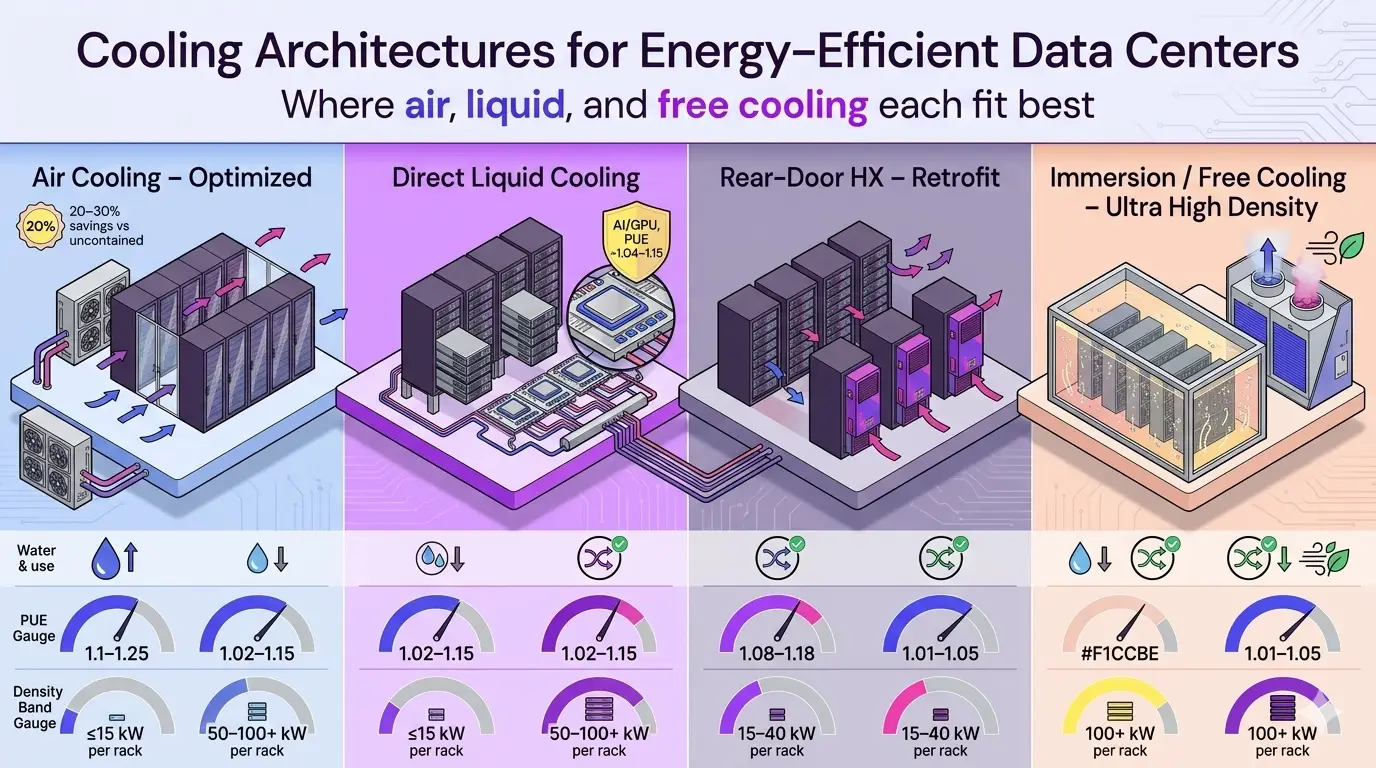

Cooling solutions for data centers are make-or-break. Air? Fine for 5kW racks. Liquid? Mandatory for AI’s 50kW+ monsters.

Traditional air-cooled facilities can spend around 38% of their total energy on cooling alone, and that figure rises as rack densities climb. With modern GPU servers running at 10–30 kW per rack (and AI clusters going far higher), air-based cooling alone simply cannot keep up.

Air Cooling: Still Viable, But Requires Optimization

For facilities running general-purpose workloads at lower densities, airflow optimization remains a highly cost-effective starting point. Hot-aisle/cold-aisle containment, where server intake (cold) and exhaust (hot) sides are separated physically, can reduce cooling energy by 20–30% with minimal capital investment. Blanking panels, raised-floor plenum sealing, and variable-speed fans add further savings on top.

Liquid Cooling: The Future is Already Here

By volume, water can absorb roughly 3,000 times more heat than air. This makes liquid cooling the clear winner for high-density and AI workloads. Liquid-cooled facilities routinely achieve PUE ratings in the 1.04 to 1.1 range, cutting total power consumption by up to 40% versus air-cooled equivalents.

There are three main liquid cooling approaches, each suited to different scenarios. The table below compares them, with evaporative/free cooling included as a reference point:

| Cooling Type | How It Works | Best For | PUE Range |
|---|---|---|---|
| Direct Liquid Cooling (DLC) | Cold plates on CPUs/GPUs; liquid absorbs heat at source | AI/GPU clusters, HPC workloads | 1.04 – 1.15 |
| Rear-Door Heat Exchangers | Chilled water coils in rack doors absorb exhaust heat | Retrofit of existing air-cooled rows | 1.10 – 1.25 |
| Immersion Cooling | Servers submerged in dielectric fluid; fan-free operation | Ultra-high density, max efficiency | 1.02 – 1.10 |
| Evaporative / Free Cooling | Outside air or water tower used when ambient is cool enough | Cold climate facilities, Tier II/III | 1.05 – 1.20 |

Closed-loop liquid cooling systems also reduce water consumption by 30–50% compared to evaporative air cooling, which is increasingly important as facilities face scrutiny over their Water Usage Effectiveness (WUE) scores. Such systems can be implemented to effectively cool hyperscale data centers.
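WUE is commonly defined as annual site water use in liters divided by annual IT equipment energy in kWh. The sketch below applies that definition and then shows what the 30–50% water reduction mentioned above would do to the score. The baseline water and energy figures are hypothetical.

```python
def wue(annual_water_liters: float, annual_it_kwh: float) -> float:
    """Water Usage Effectiveness in liters per kWh of IT energy."""
    if annual_it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return annual_water_liters / annual_it_kwh


# Hypothetical evaporative-cooled baseline.
baseline_water_liters = 18_000_000
it_energy_kwh = 10_000_000

baseline_wue = wue(baseline_water_liters, it_energy_kwh)
print(f"Baseline WUE: {baseline_wue:.2f} L/kWh")

# Apply the 30% and 50% ends of the reduction range quoted in the text.
for reduction in (0.30, 0.50):
    reduced_water = baseline_water_liters * (1 - reduction)
    print(f"{reduction:.0%} less water -> WUE {wue(reduced_water, it_energy_kwh):.2f} L/kWh")
```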

Transitioning from air to liquid cooling is not just a hardware upgrade. It requires redesigning rack density, thermal zoning, and workload distribution. Aptly Technology supports this transition with liquid-cooling-ready architectures, retrofit strategies, and workload-aware cooling optimization tailored for AI and GPU-heavy environments, enabling energy-efficient data center design.

Data Center Power Management: Reducing Losses from Grid to Server

Power delivery is a hidden source of waste. Every component between the utility meter and the server’s CPU dissipates some energy as heat. Modernizing this chain produces meaningful PUE improvements without touching a single server.

High-Efficiency UPS and Power Distribution

Uninterruptible Power Supplies (UPS) operating in double-conversion mode typically run at 92–94% efficiency. Switching to Eco-mode or modular UPS architectures can push this to 98–99%, recovering roughly 4–6% of the energy drawn through the UPS. Similarly, distributing power at higher voltage levels (480V over 120V in North America, for instance) cuts resistive losses in cables and transformers.
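The savings from a UPS upgrade follow directly from the efficiency figures. As a rough sketch, assuming a constant IT load and a single efficiency number per UPS (real efficiency varies with load level), the energy drawn at the UPS input is the IT load divided by efficiency. The 1,000,000 kWh load is a hypothetical value.

```python
def ups_input_kwh(it_load_kwh: float, efficiency: float) -> float:
    """Energy drawn at the UPS input to deliver a given IT load."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return it_load_kwh / efficiency


def upgrade_savings(it_load_kwh: float, old_eff: float, new_eff: float):
    """Return (kWh saved, fraction of the old UPS input energy saved)."""
    old_input = ups_input_kwh(it_load_kwh, old_eff)
    new_input = ups_input_kwh(it_load_kwh, new_eff)
    saved = old_input - new_input
    return saved, saved / old_input


# Hypothetical annual IT load, upgraded from double-conversion to a 98% unit.
for old_eff in (0.92, 0.94):
    saved_kwh, saved_fraction = upgrade_savings(1_000_000, old_eff, 0.98)
    print(f"{old_eff:.0%} -> 98%: saves {saved_kwh:,.0f} kWh ({saved_fraction:.1%})")
```

Under these assumptions the saving works out to about 6% when starting from 92% efficiency and about 4% when starting from 94%, which lines up with the range quoted above.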

Server Virtualization and Workload Optimization

Physical servers sitting at 5–10% utilization still draw 50–60% of peak power. Server virtualization pools those idle cycles, often consolidating 8–10 physical machines onto a single well-utilized host. AI-driven workload scheduling tools take this further by dynamically placing workloads on the most power-efficient servers in real time, accounting for cooling zone temperatures, time-of-use electricity pricing, and thermal headroom.
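A back-of-the-envelope model shows why consolidation pays off. The sketch below assumes a simple linear power curve (idle draw plus a utilization-proportional part), a 500 W peak, and idle draw at 50% of peak. All three are illustrative assumptions; real servers have nonlinear curves, and the achievable consolidation ratio depends on memory and I/O as well as CPU.

```python
def server_power_w(utilization: float,
                   peak_w: float = 500.0,
                   idle_fraction: float = 0.5) -> float:
    """Linear power model: idle draw plus a share of the remaining headroom."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    idle_w = peak_w * idle_fraction
    return idle_w + (peak_w - idle_w) * utilization


# Ten lightly loaded servers at 8% utilization each...
before_w = 10 * server_power_w(0.08)
# ...carry the same total work as one host at 80% utilization.
after_w = server_power_w(0.80)

print(f"Before consolidation: {before_w:.0f} W")
print(f"After consolidation:  {after_w:.0f} W")
print(f"Reduction: {1 - after_w / before_w:.0%}")
```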

Energy Monitoring Tools: You Cannot Manage What You Do Not Measure

Real-time energy monitoring tools at the PDU, rack, and server level are now table stakes for serious efficiency programs. DCIM (Data Center Infrastructure Management) platforms aggregate power, temperature, and utilization data into dashboards that enable both reactive alerting and proactive capacity planning. The best systems use machine learning to identify anomalies — like a cooling unit running 15% harder than expected — before they balloon into costly problems.
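Production DCIM platforms use learned models for this, but the core idea can be illustrated with a plain threshold check. The sketch below flags any cooling unit whose measured power exceeds its expected baseline by a given margin. It is a deliberately simple stand-in, not a machine learning method, and the unit names and readings are hypothetical.

```python
def flag_anomalies(readings: dict, threshold: float = 0.15) -> dict:
    """Return units whose measured power exceeds expected by >= threshold.

    readings maps a unit name to (expected_kw, measured_kw).
    The result maps each flagged unit to its relative deviation.
    """
    flagged = {}
    for unit, (expected_kw, measured_kw) in readings.items():
        if expected_kw <= 0:
            raise ValueError(f"expected power for {unit} must be positive")
        deviation = (measured_kw - expected_kw) / expected_kw
        if deviation >= threshold:
            flagged[unit] = deviation
    return flagged


# Hypothetical CRAC readings: (expected kW, measured kW).
crac_readings = {
    "crac-01": (40.0, 41.0),
    "crac-02": (40.0, 48.0),  # running 20% harder than expected
    "crac-03": (35.0, 34.0),
}

for unit, deviation in flag_anomalies(crac_readings).items():
    print(f"{unit}: {deviation:.0%} above expected")
```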

Renewable Energy Integration: Powering Green Data Center Design

Renewable energy integration is now the single biggest differentiator in low-carbon, energy-efficient data center design. Google, Microsoft, and Amazon have all committed to matching 100% of their energy consumption with renewable purchases, and the strategies they use are increasingly accessible to mid-market operators too.

Power Purchase Agreements (PPAs)

A corporate PPA lets a data center operator contract directly with a wind or solar developer for a fixed electricity price over 10–20 years. This hedges against fossil fuel price volatility, gives customers a credible low-carbon story, and in many markets is now cheaper than grid electricity. Google signed its first PPA in 2010, and since 2017 it has matched 100% of its annual global electricity consumption with renewable energy purchases.
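The hedging value of a fixed-price PPA can be sketched by comparing cumulative cost against a grid price that rises over time. Every number below is a hypothetical placeholder (annual consumption, both prices, the 3% escalation rate, the 15-year term), and the model ignores discounting, shape risk, and contract fees, so treat it as an illustration of the mechanism rather than a pricing tool.

```python
def cumulative_cost(annual_mwh: float,
                    price_per_mwh: float,
                    years: int,
                    annual_escalation: float = 0.0) -> float:
    """Total undiscounted cost over the term with a yearly price escalator."""
    return sum(
        annual_mwh * price_per_mwh * (1 + annual_escalation) ** year
        for year in range(years)
    )


# Hypothetical inputs.
annual_mwh = 50_000
term_years = 15

ppa_total = cumulative_cost(annual_mwh, price_per_mwh=45.0, years=term_years)
grid_total = cumulative_cost(annual_mwh, price_per_mwh=50.0, years=term_years,
                             annual_escalation=0.03)

print(f"Fixed-price PPA over {term_years} years: ${ppa_total:,.0f}")
print(f"Grid with 3% escalation:          ${grid_total:,.0f}")
print(f"Difference:                       ${grid_total - ppa_total:,.0f}")
```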

On-Site Generation and Storage

Rooftop or carport solar paired with battery storage enables partial self-generation and smooths demand peaks. For edge data centers in markets with unreliable grids, this also improves resilience. Fuel cells running on biogas are gaining traction in North America as a dispatchable, low-carbon backup alternative to diesel generators.

Strategic Load Shifting

Not all workloads are time-sensitive. Batch AI training jobs, data pipeline processing, and backup operations can be scheduled during hours when the grid carbon intensity is lowest, which depends on the local generation mix: often overnight on wind-heavy grids and around midday on solar-heavy ones. This approach, sometimes called carbon-aware computing, is being adopted by Microsoft and other Hyperscalers to reduce their operational carbon footprint without impacting service levels.
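At its simplest, carbon-aware scheduling means picking the start time that minimizes total carbon intensity over the job's duration. The sketch below does this with a sliding window over an hourly forecast. The forecast values (in gCO2/kWh) are hypothetical, and a real scheduler would pull them from a grid data provider and also respect deadlines and capacity limits.

```python
def best_start_hour(intensity_forecast: list, job_hours: int) -> int:
    """Index of the start hour whose window has the lowest total intensity."""
    hours = len(intensity_forecast)
    if job_hours <= 0 or job_hours > hours:
        raise ValueError("job length must fit inside the forecast")

    def window_total(start: int) -> float:
        return sum(intensity_forecast[start:start + job_hours])

    # min() returns the earliest start when several windows tie.
    return min(range(hours - job_hours + 1), key=window_total)


# Hypothetical hourly forecast in gCO2/kWh.
forecast = [400, 380, 300, 200, 180, 220, 350, 420]
start = best_start_hour(forecast, job_hours=3)
window = forecast[start:start + 3]

print(f"Start the 3-hour job at hour {start}")
print(f"Average intensity in that window: {sum(window) / len(window):.0f} gCO2/kWh")
```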

Together, these measures support a sustainable green data center design and align with data center sustainability best practices.

Modular Data Centers: Building Efficiency in From Day One

Traditional data centers are sized for peak capacity — which means they run at partial load (and poor efficiency) for the first several years of their life. Modular data centers solve this by deploying capacity in discrete, factory-built pods that can be brought online as demand grows.

Each module is a self-contained unit with its own power delivery, cooling, and fire suppression. Because modules are pre-tested at the factory, build times shrink from 18–24 months to 6–9 months. More importantly, each module operates at or near full utilization from day one, keeping PUE low across the facility’s entire operational life — not just at theoretical maximum load.
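The partial-load penalty can be illustrated with a toy model in which overhead has a fixed part (equipment that draws power regardless of load) and a part proportional to IT load. The figures below are assumptions picked for illustration, not measurements: a monolithic hall with 1 MW of fixed overhead versus a single module with 0.2 MW, both serving 2 MW of IT load, with variable overhead at 15% of IT load.

```python
def pue_at_load(it_mw: float,
                fixed_overhead_mw: float,
                variable_overhead_ratio: float = 0.15) -> float:
    """PUE under a fixed-plus-proportional overhead model (toy assumption)."""
    if it_mw <= 0:
        raise ValueError("IT load must be positive")
    total_mw = it_mw + fixed_overhead_mw + variable_overhead_ratio * it_mw
    return total_mw / it_mw


# Early in the facility's life: only 2 MW of IT load is deployed.
monolithic_pue = pue_at_load(2.0, fixed_overhead_mw=1.0)
modular_pue = pue_at_load(2.0, fixed_overhead_mw=0.2)

print(f"Monolithic hall at partial load: PUE {monolithic_pue:.2f}")
print(f"Single full module:              PUE {modular_pue:.2f}")
```

In this model the fixed overhead is spread over whatever IT load exists, which is why right-sized modules keep PUE lower while the facility is still filling up.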

Edge data centers take this concept further, deploying small modular units close to end users to reduce latency and network energy. Hyperscale data centers are increasingly adopting modular architectures to speed deployment while keeping the operational efficiency advantages of centralized management and energy-efficient server architecture.

Gaining Efficiency with AI Workloads Energy Optimization

AI workloads present a unique energy challenge. A single large language model training run can consume as much energy as hundreds of transatlantic flights. But AI is also one of the most powerful tools available for managing energy consumption inside the data center itself.

Google’s DeepMind team demonstrated this vividly when its AI system reduced the energy used for cooling at Google’s data centers by up to 40%, which equated to a 15% reduction in overall PUE overhead. The system analyzed thousands of sensor readings in real time and identified non-obvious relationships between cooling tower settings, server loads, and ambient weather conditions, optimizations that human operators would struggle to find manually.

Practical AI Workloads Energy Optimization Strategies:

- Use mixed-precision training (FP16 or BF16 instead of FP32) to reduce GPU compute time and energy per training run.

- Implement model distillation to deploy smaller, equally capable inference models that run at a fraction of the energy cost.

- Schedule non-urgent batch workloads during off-peak hours when cooling is more efficient and grid carbon intensity is lower.

- Adopt GPU sharing and multi-tenancy frameworks (like NVIDIA MIG) to maximize utilization per GPU instead of spinning up underutilized dedicated instances.

- Monitor energy-per-inference as a KPI alongside latency and throughput to drive ongoing energy optimization of AI infrastructure and workloads.
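The energy-per-inference KPI from the last point is straightforward to compute once you have average power draw and a request count for the same time window. The sketch below assumes you already collect both (for example from smart PDUs and serving logs). The 700 W draw and the request count are hypothetical.

```python
def energy_per_inference_joules(avg_power_w: float,
                                window_seconds: float,
                                inference_count: int) -> float:
    """Average energy per request: power times time, divided by requests."""
    if inference_count <= 0:
        raise ValueError("need at least one inference in the window")
    return avg_power_w * window_seconds / inference_count


def joules_to_wh(joules: float) -> float:
    """Convert joules to watt-hours (1 Wh = 3600 J)."""
    return joules / 3600.0


# Hypothetical: one accelerator averaging 700 W over an hour of serving.
per_request_j = energy_per_inference_joules(
    avg_power_w=700.0, window_seconds=3600.0, inference_count=1_260_000
)

print(f"Energy per inference: {per_request_j:.2f} J")
print(f"Energy per 1,000 inferences: {joules_to_wh(per_request_j * 1000):.3f} Wh")
```

Tracking this number before and after changes such as mixed precision or distillation gives a direct measure of whether they reduced energy per unit of useful work.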

Green Certifications and Sustainability Standards

Energy monitoring tools (DCIM) combined with standards such as ISO 50001 and LEED form the basis for green certification. Demonstrating sustainability credentials to enterprise customers and regulators increasingly requires formal certification. Here are the most relevant standards for data center operators pursuing energy-efficient data center design in 2026:

| Standard / Certification | Scope | Key Focus Areas |
|---|---|---|
| LEED (Leadership in Energy and Environmental Design) | Building and site | Energy use, water efficiency, materials, site selection |
| ISO 50001 | Energy management systems | Continual improvement in energy performance, monitoring, and targets |
| ENERGY STAR for Data Centers | IT equipment and facility | PUE benchmarking, equipment efficiency thresholds |
| EU Code of Conduct for Data Centers | Operational efficiency (Europe) | Best practice guidance, PUE reporting, renewable energy commitments |
| Carbon Neutral / Net Zero commitments | Operational + supply chain | Scope 1, 2, and 3 emissions measurement and offset strategies |

Beyond formal certification, many enterprise procurement teams now require data centers to publish real-time PUE and carbon intensity dashboards as a condition of tenancy. Transparency is fast becoming a commercial requirement, not just an ethical one.

How to Reduce Power Consumption in Data Centers: A Practical Framework

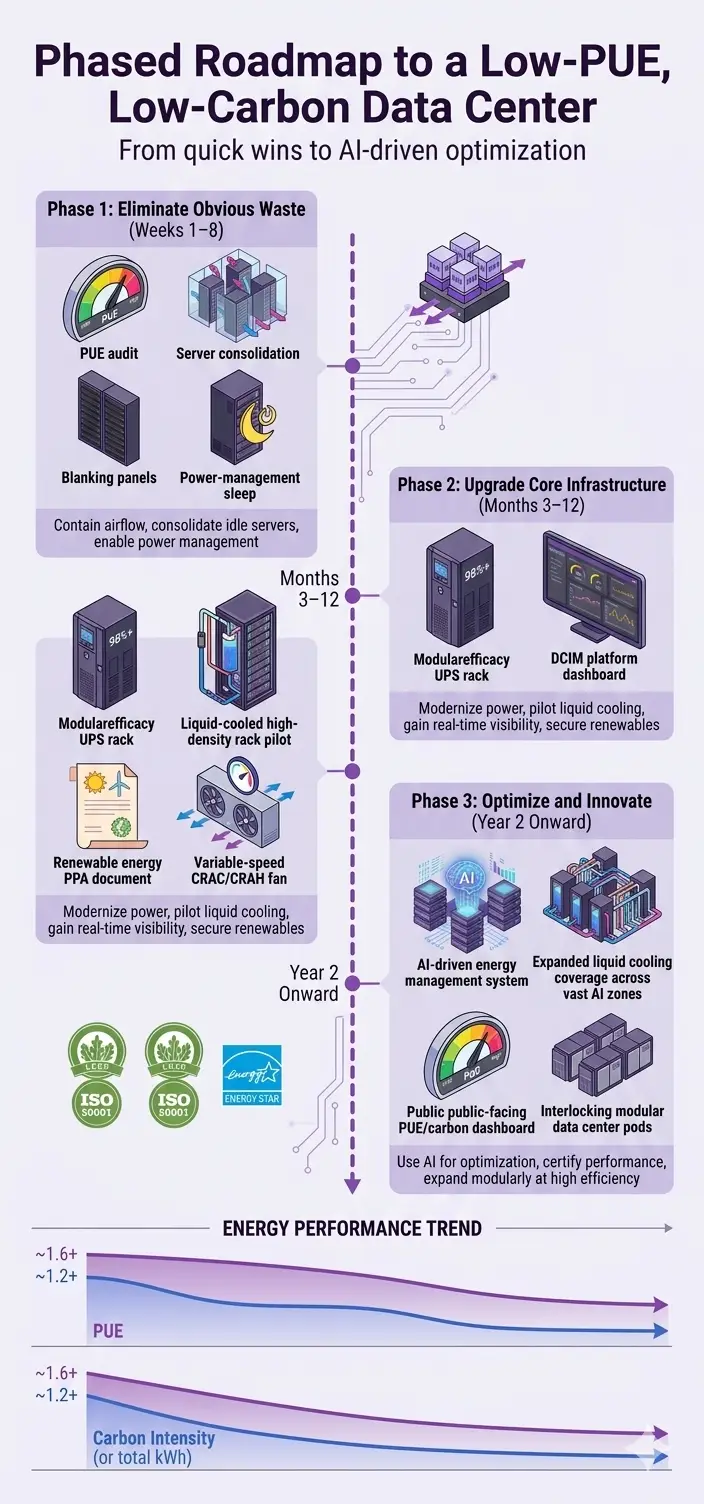

If you are starting from scratch or retrofitting an existing facility, here is a phased approach that prioritizes the highest-impact actions first:

Phase 1: Eliminate Obvious Waste (Weeks 1–8)

- Audit current PUE and identify the top three overhead contributors (cooling, UPS losses, lighting).

- Implement hot-aisle/cold-aisle containment if not already in place.

- Add blanking panels to all empty rack units to prevent hot-air recirculation.

- Consolidate underutilized servers through virtualization.

- Enable power management features (sleep states, dynamic voltage scaling) on all servers.

Phase 2: Upgrade Core Infrastructure (Months 3–12)

- Replace legacy UPS with high-efficiency modular units (targeting 98%+ efficiency).

- Evaluate and pilot liquid cooling for your highest-density racks.

- Deploy DCIM software for real-time visibility across power, cooling, and compute.

- Explore a corporate PPA or green tariff to transition to renewable energy.

- Upgrade legacy CRAC units to variable-speed, demand-driven models.

Phase 3: Optimize and Innovate (Year 2 Onward)

- Implement AI-driven energy management for predictive cooling and workload scheduling.

- Expand liquid cooling coverage to all high-density zones.

- Pursue LEED or ISO 50001 certification to formalize your sustainability program.

- Publish annual PUE and carbon intensity data for customer and investor transparency.

- Evaluate modular expansion for future capacity to maintain efficiency at scale.

Aptly’s Energy Efficient Data Centers Design Expertise

Aptly Technology delivers energy-efficient data center design for AI-era loads: PUE-optimized, liquid-ready, and renewable-integrated. Our managed operations cut energy use by 25% or more through AI scheduling and real-time DCIM.

Check our Enterprise Data Center Buildout Guide for more information.

Conclusion: Building for an Energy-Efficient Future

The pressure on data center operators to build and run more efficient facilities is only going to intensify. Global data center energy demand is on track to surpass 500 TWh in 2026, and with AI workloads accelerating that growth, every design decision matters more than it ever has before.

The good news is that the technology stack for sustainable data center solutions is mature and proven. From PUE optimization and liquid cooling to renewable energy integration and AI-driven power management, the tools exist to design a facility that is both high-performance and low-carbon. The organizations that invest in these capabilities now will find themselves with a durable competitive advantage as energy costs climb and sustainability requirements tighten.

Energy efficient data center design is no longer a premium feature. In 2026, it is the baseline expectation — and for the most forward-thinking operators, it is a genuine business strategy.

To discuss your secure deployment roadmap, contact Aptly.

See also: GPU Datacenter Buildout Services, AI Infrastructure Managed Services, Managed Data Center Services

Frequently Asked Questions (FAQ):

- What is a good PUE for a data center in 2026?

- A PUE of 1.2 or lower is considered excellent for most commercial facilities. Hyperscale operators and liquid-cooled AI clusters regularly achieve 1.04 to 1.1. The global average remains around 1.58, which means most facilities still have significant room to improve.

- What is the best cooling system for data centers running AI workloads?

- Direct liquid cooling (DLC) and immersion cooling are the best choices for AI and GPU-heavy workloads. These systems handle power densities of 100 kW per rack or more, far beyond what air cooling can manage, and can cut total energy consumption by up to 40% compared with traditional CRAC-based designs.

- How do hyperscale data centers improve energy efficiency?

- Hyperscalers combine several approaches: custom-designed, highly efficient servers; advanced airflow and liquid cooling; aggressive virtualization and workload consolidation; 100% renewable energy procurement via PPAs; and AI-driven infrastructure management platforms. The cumulative effect pushes PUE well below the industry average.

- What are the core sustainable data center design principles, and how do you build a green data center from scratch?

- Building a green data center from scratch starts with smart site selection, choosing locations with cooler climates, access to renewable energy grids, and low water stress, since geography alone can cut your baseline cooling costs by 20–30% before a single server is ever racked. The four core sustainable data center solutions and design principles are: sourcing low-carbon energy (ideally through Power Purchase Agreements or on-site solar and wind), designing for circularity by using modular and upgradeable hardware to slash e-waste, optimizing cooling with liquid or closed-loop waterless systems matched to local conditions, and deploying real-time energy monitoring tools to track PUE, WUE, and CUE from day one.

- On the infrastructure side, high-efficiency UPS systems targeting 98%+ efficiency, hot-aisle/cold-aisle containment, and server virtualization are non-negotiable baseline choices that collectively prevent 30–40% of energy from going to waste before you even consider renewable sources. Green building certifications like LEED or ISO 50001 should be built into the project scope from the design phase — not bolted on later — as they enforce accountability across energy performance, materials sourcing, and operational targets that keep the facility honest over its full lifecycle.

- Finally, sustainability KPIs including PUE (target below 1.2), Carbon Usage Effectiveness (CUE), and server utilization rates need to be tracked continuously, because a green data center is not a one-time build decision but an ongoing operational discipline where AI-driven monitoring and carbon-aware workload scheduling compound gains year over year.

- What technologies reduce data center carbon footprint most effectively?

- The biggest impact comes from renewable energy procurement (eliminating Scope 2 emissions), liquid cooling (cutting energy consumption by up to 40%), and AI-driven workload optimization. Supplementing these with battery storage, carbon-aware scheduling, and server virtualization creates a comprehensive low-carbon operating model.

- How to monitor energy efficiency in data centers?

- DCIM platforms aggregate PUE, temperature, power draw, and utilization data in real time across the full facility stack. Smart PDUs provide per-rack and per-server visibility. Many operators also track Water Usage Effectiveness (WUE) and Carbon Usage Effectiveness (CUE) alongside PUE to build a complete sustainability picture.

- What are the latest trends in data center sustainability?

- In 2026, data center sustainability has moved from aspiration to hard operational requirement.

- The biggest shift is the rapid mainstream adoption of liquid and direct-to-chip cooling, driven by AI’s extreme heat loads, with closed-loop systems now cutting energy use by up to 40% over traditional air cooling.

- Renewable energy sourcing has crossed the 58% mark industry-wide, led by PPAs, nuclear deals, and hybrid solar-gas setups.

- Regulators are stepping up too: the EU’s revised Energy Efficiency Directive now mandates PUE and waste-heat reporting for any facility above 500 kW.
