Introduction

AI is moving fast. According to IBM’s 2024 Global AI Adoption Index, 42% of enterprise-scale companies report actively deploying AI in their businesses. But as AI spreads across organizations, so does the attack surface. Every new model, data pipeline, and API endpoint is a potential entry point for attackers.

The risks are not theoretical. Gartner predicts that by 2027, over 40% of AI-related data breaches will be caused by improper use of generative AI across borders. Data leakage, model theft, adversarial inputs, and insecure APIs are no longer edge cases; they are growing threats in production AI environments.

Traditional security models were not designed for the complexity of ML pipelines, training datasets, model artifacts, and inference endpoints. Protecting those assets requires a different approach.

This guide explains what secure AI infrastructure actually means, how it is structured, and how you can build it step by step. Whether you manage on-premises systems, cloud environments, or a mix of both, you will find a practical path forward here.

What Is a Secure AI Infrastructure? (Architecture Overview)

A secure AI infrastructure is a multi-layered architecture that protects every stage of the AI lifecycle, from data ingestion to model deployment and monitoring.

Here are the four key layers of enterprise AI security architecture:

1. Data Layer: This is where your raw and processed information resides. Security here means encryption at rest and in transit, strict access controls, and data lineage tracking so you know exactly where your data has been.

2. Model Layer: The algorithms and weights that represent your intellectual property. This layer protects model artifacts from theft, tampering, and unauthorized access. It also includes versioning, signing, and vulnerability testing against adversarial attacks.

3. MLOps / Pipeline Layer: The “factory” that builds and updates your models. Securing this layer means hardening your automation scripts, managing secrets properly, and adding validation checkpoints before anything goes to production.

4. Deployment / Inference Layer: This is where models serve predictions. It includes API security, authentication, rate limiting, and runtime monitoring. A breach here can expose both your model and the sensitive data users send to it.

The table below highlights the differences between traditional IT security and AI-specific needs:

| Security Focus | Traditional IT | Secure AI Infrastructure |
|---|---|---|
| Primary Asset | Files and Databases | Models and Training Sets |
| Threat Type | Malware and Phishing | Data Poisoning and Model Theft |
| Access Control | User-based | Role-based + Data Lineage |
| Monitoring | Network Traffic | Model Drift and Adversarial Inputs |

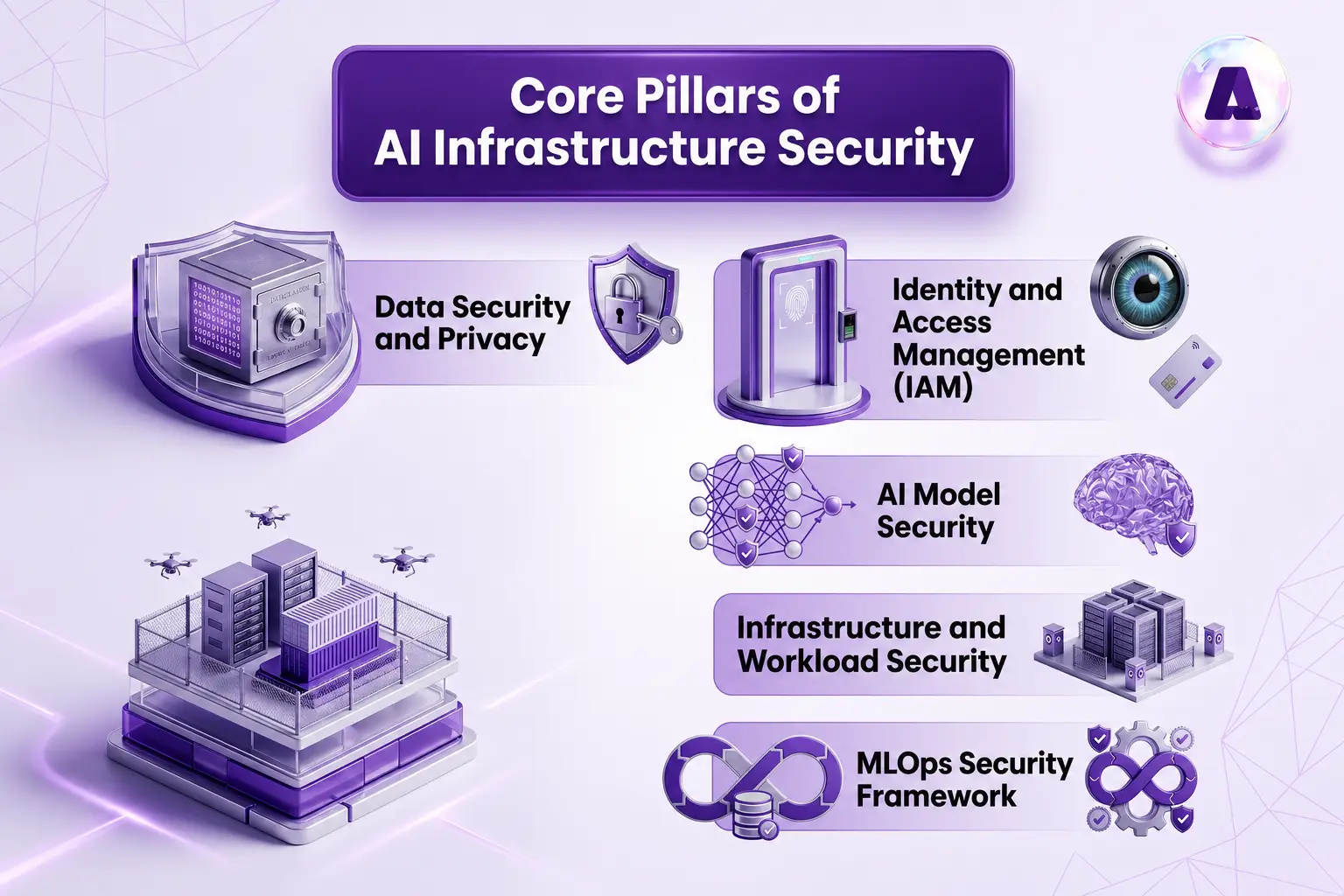

Core Pillars of AI Infrastructure Security

To build a secure AI infrastructure, you must focus on five foundational pillars. A weakness in any one of them creates a gap that attackers can exploit.

Data Security and Privacy

Data is the foundation of every AI system, which also makes it the most attractive target. IBM’s Cost of a Data Breach Report 2024 found that the global average cost of a data breach reached $4.88 million. For AI systems, the stakes are even higher because training data often contains sensitive personal or business-critical information.

Here is what solid data security looks like in practice:

- Encrypt data at rest (AES-256), in transit (TLS 1.3), and in use where possible using confidential computing

- Build secure data pipelines with end-to-end lineage tracking

- Anonymize or mask personally identifiable information (PII) before it enters training workflows

- Use differential privacy techniques to prevent training data leakage from model outputs
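To make the masking bullet concrete, here is a minimal Python sketch (standard library only) that pseudonymizes a user identifier with a keyed hash and redacts emails and phone numbers from free text before a record reaches a training job. The regex patterns, the record fields (`user_id`, `note`, `label`), and the hard-coded key are illustrative assumptions. Keyed hashing is pseudonymization rather than true anonymization: anyone holding the key can recompute tokens for known identifiers, so the key belongs in your secrets manager.

```python
import hashlib
import hmac
import re

# Illustrative patterns only; production PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b")

def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a stable keyed hash (HMAC-SHA256).

    The same input always maps to the same token, so joins still work,
    but the raw value never enters the training set.
    """
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"user_{digest[:16]}"

def mask_free_text(text: str) -> str:
    """Redact emails first, then phone numbers, embedded in free text."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)

def scrub_record(record: dict, key: bytes) -> dict:
    """Return a copy of a (hypothetical) record that is safe to pass to training."""
    return {
        "user_id": pseudonymize(record["user_id"], key),
        "note": mask_free_text(record["note"]),
        "label": record["label"],
    }

if __name__ == "__main__":
    # Hypothetical key; load it from your secrets manager in practice.
    key = b"example-key-from-secrets-manager"
    raw = {"user_id": "alice@example.com",
           "note": "Call me at 555-123-4567 or mail alice@example.com",
           "label": 1}
    print(scrub_record(raw, key))
```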

Identity and Access Management (IAM)

IAM is one of the most overlooked areas in AI security. Many breaches happen not because of sophisticated attacks, but because someone had access they should not have had.

- Apply role-based and attribute-based access controls to every data asset and model artifact

- Enforce the least-privilege principle

- Use multi-factor authentication (MFA) and robust secrets management to prevent unauthorized users from hijacking your data pipelines

- Rotate secrets and API keys regularly, and store them in a dedicated secrets manager
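Least privilege is easiest to enforce when access checks deny by default. The sketch below, with a hypothetical `ROLE_PERMISSIONS` table and `Principal` type, grants an action only when one of the caller's roles explicitly allows it, and adds one attribute-based rule: write actions also require an MFA-verified session. It illustrates the logic only; in practice these rules usually live in your cloud IAM or a policy engine rather than in application code.

```python
from dataclasses import dataclass, field

# Hypothetical role -> permission table. Each role gets only the
# (resource_type, action) pairs it needs: least privilege.
ROLE_PERMISSIONS = {
    "data-engineer": {("dataset", "read"), ("dataset", "write")},
    "ml-engineer": {("dataset", "read"), ("model", "read"), ("model", "write")},
    "inference-service": {("model", "read")},
}

@dataclass
class Principal:
    name: str
    roles: set = field(default_factory=set)
    mfa_verified: bool = False  # attribute used by the attribute-based rule below

def is_allowed(principal: Principal, resource_type: str, action: str) -> bool:
    """Deny by default: allow only when some role explicitly grants the action."""
    # Attribute-based rule: any write requires an MFA-verified session.
    if action == "write" and not principal.mfa_verified:
        return False
    return any(
        (resource_type, action) in ROLE_PERMISSIONS.get(role, set())
        for role in principal.roles
    )

if __name__ == "__main__":
    scorer = Principal("fraud-scorer", {"inference-service"})
    print(is_allowed(scorer, "model", "read"))    # True
    print(is_allowed(scorer, "dataset", "read"))  # False: never granted
```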

AI Model Security

Your model is the product of months of expensive work, so protecting it matters. Model inversion attacks allow attackers to reconstruct training data from a model’s outputs. Model extraction attacks let adversaries steal your model by querying it repeatedly.

- Store model artifacts in encrypted, access-controlled repositories

- Sign all model files so you can verify integrity before deployment

- Test models regularly against adversarial examples and known attack patterns

- Limit the detail in model outputs to reduce the risk of information leakage
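One way to sketch the sign-then-verify flow: hash the artifact in a streaming fashion, compute an HMAC-SHA256 tag over the digest, and refuse to load any file whose recomputed tag does not match. This is a simplified stand-in that assumes a symmetric key; with HMAC, anyone who can verify can also sign, so production setups typically use asymmetric signatures (for example Sigstore or GPG) where the deployment side holds only a public key. The function names are hypothetical.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path

def file_sha256(path: Path) -> str:
    """Stream the file in 1 MiB chunks so large weights never need to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()

def sign_artifact(path: Path, key: bytes) -> str:
    """Tag the artifact digest with HMAC-SHA256 (a symmetric stand-in for a signature)."""
    return hmac.new(key, file_sha256(path).encode(), hashlib.sha256).hexdigest()

def verify_artifact(path: Path, key: bytes, expected_tag: str) -> bool:
    """Recompute the tag and compare in constant time before loading the model."""
    return hmac.compare_digest(sign_artifact(path, key), expected_tag)

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        model = Path(tmp) / "model.bin"
        model.write_bytes(b"fake weights")
        key = b"demo-key"  # hypothetical; keep real keys in a secrets manager or KMS
        tag = sign_artifact(model, key)
        print("verified:", verify_artifact(model, key, tag))
```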

Infrastructure and Workload Security

Most AI workloads run on containerized infrastructure, Kubernetes clusters, GPU nodes, and cloud-managed compute. Each of these introduces its own attack surface.

- Harden container images and scan them for vulnerabilities before deployment

- Use namespaces and network policies in Kubernetes to isolate AI workloads from other services

- Apply runtime protection to detect anomalous behavior inside containers

- Audit GPU access and monitor for unauthorized compute usage
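As an illustration of network isolation, the hypothetical helper below builds a Kubernetes `NetworkPolicy` manifest (emitted as JSON, which `kubectl apply -f -` accepts) that admits ingress to the model-serving pods only from pods labeled as the API gateway, on a single TCP port. The namespace, labels, and port are assumptions. A `podSelector` inside `from` matches pods in the policy's own namespace only, and policies take effect only if your cluster's network plugin enforces them.

```python
import json

def inference_network_policy(namespace: str, app: str, allowed_client: str, port: int) -> dict:
    """Build a NetworkPolicy admitting ingress to `app` pods only from `allowed_client` pods.

    Once a pod is selected by an ingress policy, any ingress traffic not
    allowed by some policy is dropped, so other pods lose access.
    """
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"{app}-ingress", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                # Same-namespace pods with this label only.
                "from": [{"podSelector": {"matchLabels": {"app": allowed_client}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }

if __name__ == "__main__":
    # Hypothetical names; pipe the output to: kubectl apply -f -
    policy = inference_network_policy("ml-serving", "fraud-model", "api-gateway", 8080)
    print(json.dumps(policy, indent=2))
```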

MLOps Security Framework

ML pipelines are software pipelines. The same security principles that apply to DevSecOps apply here too, but with additional considerations specific to model training and deployment.

- Treat ML CI/CD pipelines as critical infrastructure and apply the same security standards you use for production software

- Validate model behavior at every stage

- Implement comprehensive audit logging so you can trace exactly what data trained which model and who approved the deployment

- Use automated compliance frameworks to flag policy violations before they reach production
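For the audit-logging bullet, a hash chain is a simple way to make tampering evident: each entry stores the hash of the previous one, so altering any earlier record invalidates everything after it. The sketch below is in-memory, and the event names and fields (`dataset_sha256`, `model_version`, `approver`) are hypothetical; a real deployment would also write entries to append-only or write-once storage.

```python
import hashlib
import json
import time

class AuditLog:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def append(self, event: str, **details) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"ts": time.time(), "event": event, "details": details, "prev": prev_hash}
        payload = json.dumps(body, sort_keys=True).encode()
        entry = {**body, "hash": hashlib.sha256(payload).hexdigest()}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or removed entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode()
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

if __name__ == "__main__":
    log = AuditLog()
    log.append("train", dataset_sha256="9f2c41d0", model_version="1.4.0")
    log.append("approve_deploy", model_version="1.4.0", approver="jane")
    print("chain intact:", log.verify())
```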

Zero Trust AI Architecture: A Modern Approach

In a zero trust AI architecture, no system or user is trusted by default. Every request must be verified. Let’s check out the principles of zero trust AI architecture:

- Continuous Verification: Verifying the identity of every user and microservice that interacts with your model, on every request.

- Micro-segmentation: Dividing your AI environment into small zones to prevent lateral movement by attackers.

- Adaptive Access: Changing access levels based on the risk profile of the user or the device.

How to implement zero trust in AI systems:

- Enforce strict identity verification for every interaction

- Monitor behavior patterns across AI workflows

- Restrict lateral movement within systems

This approach reduces the risk of insider threats and unauthorized access.
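Continuous verification can be sketched in miniature: every call carries a short-lived signed token, and the model service re-checks the signature, audience, expiry, and caller identity on each request instead of trusting anything that is already inside the network. The token format, `SIGNING_KEY`, and service names are hypothetical and hand-rolled for illustration only; real deployments typically rely on mutual TLS or standard signed tokens such as JWTs issued by an identity provider.

```python
import base64
import hashlib
import hmac
import json
import time

SIGNING_KEY = b"demo-only-key"  # hypothetical; never hard-code real keys

def issue_token(service: str, audience: str, ttl_s: int = 300, now=None) -> str:
    """Create a short-lived token naming the caller (sub) and target service (aud)."""
    now = time.time() if now is None else now
    claims = {"sub": service, "aud": audience, "exp": now + ttl_s}
    body = base64.urlsafe_b64encode(json.dumps(claims).encode()).decode()
    sig = hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{sig}"

def verify_request(token: str, audience: str, allowed: set, now=None) -> bool:
    """Check signature, audience, expiry, and caller identity on every call."""
    now = time.time() if now is None else now
    try:
        body, sig = token.rsplit(".", 1)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False
    claims = json.loads(base64.urlsafe_b64decode(body))
    return claims["aud"] == audience and claims["exp"] > now and claims["sub"] in allowed
```

A short TTL limits how long a stolen token stays useful, and the per-service allowlist (`allowed`) is what keeps lateral movement in check: a compromised service that is not on the list cannot call the model even with a valid signature.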

Step-by-Step: How to Build a Secure AI Infrastructure for Enterprises

Building a secure system requires a structured process. Below is a practical approach you can follow.

Step 1: Assess Risks and Threat Models

Before you build anything, identify vulnerabilities in your AI systems, such as insecure data pipelines, overpermissioned service accounts, unprotected model APIs, and insufficient logging.

- Map out your data flows, model assets, and API endpoints.

- Identify which components handle the most sensitive data and which are most exposed to external access.

- Document the vulnerabilities and rank them by potential business impact.

Building a threat model helps you prioritize which defenses to build first.
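A threat model does not need heavy tooling to start. The minimal sketch below assumes a 1-to-5 likelihood and impact scale (a common convention, not one this guide prescribes), bumps likelihood by one level for internet-facing components, and sorts risks by likelihood × impact. The `Risk` type and the example assets are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    asset: str
    threat: str
    likelihood: int          # 1 (rare) to 5 (almost certain)
    impact: int              # 1 (minor) to 5 (severe business impact)
    internet_facing: bool = False

    @property
    def score(self) -> int:
        # External exposure raises likelihood by one level, capped at 5.
        likelihood = min(self.likelihood + (1 if self.internet_facing else 0), 5)
        return likelihood * self.impact

def prioritize(risks):
    """Highest score first; ties broken by impact."""
    return sorted(risks, key=lambda r: (r.score, r.impact), reverse=True)

if __name__ == "__main__":
    risks = [
        Risk("CI/CD pipeline", "secrets leaked in build logs", 2, 4),
        Risk("inference API", "model extraction via repeated queries", 3, 4, internet_facing=True),
        Risk("training data lake", "PII exposed by over-broad service account", 3, 5),
    ]
    for r in prioritize(risks):
        print(r.score, r.asset, "-", r.threat)
```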

Step 2: Secure Data Pipelines

Once you know your risk surface, protect your pipelines from ingestion to storage:

- Apply encryption to all data stores and transit paths.

- Set up access controls so only authorized systems and people can read training data.

- Implement data lineage tracking from the start.

- Validate and sanitize all data inputs before they enter training pipelines.

These controls are central to protecting AI pipelines from data breaches.
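The validate-and-sanitize step can be sketched as a schema check that quarantines bad records instead of silently ingesting or dropping them. The `SCHEMA` fields and ranges are hypothetical; real pipelines often use a dedicated validation library, but the checks are the same: required fields, types, finite values, ranges, and no unexpected fields.

```python
import math

# Hypothetical schema for a tabular training set: field -> (allowed types, min, max).
SCHEMA = {
    "age": (int, 0, 120),
    "amount": ((int, float), 0, 1_000_000),
    "label": (int, 0, 1),
}

def validate_record(record: dict) -> list:
    """Return a list of problems; an empty list means the record is accepted."""
    problems = []
    unexpected = sorted(set(record) - set(SCHEMA))
    if unexpected:
        problems.append(f"unexpected fields: {unexpected}")
    for name, (types, lo, hi) in SCHEMA.items():
        if name not in record:
            problems.append(f"missing field: {name}")
            continue
        value = record[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, types):
            problems.append(f"{name}: wrong type {type(value).__name__}")
        elif isinstance(value, float) and not math.isfinite(value):
            problems.append(f"{name}: non-finite value")
        elif not lo <= value <= hi:
            problems.append(f"{name}: {value} outside [{lo}, {hi}]")
    return problems

def split_batch(records):
    """Separate clean records from rejects so rejects can be quarantined and reviewed."""
    clean, rejected = [], []
    for r in records:
        problems = validate_record(r)
        if problems:
            rejected.append({"record": r, "problems": problems})
        else:
            clean.append(r)
    return clean, rejected
```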

Step 3: Build Secure Model Development Workflows

Create isolated environments for training and testing.

- Developers should work in sandboxed environments that cannot directly access production data or models.

- Use version control for model artifacts and sign every artifact so you can verify it has not been tampered with.

- Enable audit logging for training runs, data access, and artifact changes.

- Build model governance into your workflow from the start.

- Create model cards that document training data, intended use, known limitations, and security considerations for every model that moves toward production.
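A model card can double as a promotion gate. This sketch assumes a hypothetical `ModelCard` dataclass whose fields mirror the bullet above (training data, intended use, known limitations, security considerations) plus the signed artifact's digest, and it blocks promotion while any required field is empty. Serializing the card to JSON lets you store it next to the artifact.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class ModelCard:
    name: str
    version: str
    training_data: str = ""
    intended_use: str = ""
    known_limitations: list = field(default_factory=list)
    security_considerations: list = field(default_factory=list)
    artifact_sha256: str = ""  # digest of the signed artifact this card describes

REQUIRED = ("training_data", "intended_use", "known_limitations",
            "security_considerations", "artifact_sha256")

def missing_fields(card: ModelCard) -> list:
    """Names of required fields that are still empty."""
    return [f for f in REQUIRED if not getattr(card, f)]

def ready_for_production(card: ModelCard) -> bool:
    return not missing_fields(card)

if __name__ == "__main__":
    card = ModelCard("churn-model", "2.1.0", training_data="crm snapshot 2024-Q4",
                     intended_use="rank retention offers")
    print("missing:", missing_fields(card))
    print(json.dumps(asdict(card), indent=2))
```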

Step 4: Harden Deployment Environments

Your deployment layer is often the most exposed.

- Secure every API and endpoint that exposes your models.

- Use API gateways with authentication, rate limiting, and input validation.

- Protect inference systems behind network policies that restrict which services can call them.

- Apply the same hardening standards to your deployment infrastructure that you use for production web services
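Rate limiting is usually configured in the API gateway itself, but the underlying token-bucket algorithm is short enough to sketch. Each client gets a bucket that refills at `rate` tokens per second up to `capacity`; a request spends one token or is rejected. The class names are hypothetical, and the in-memory state would need a shared store (or the gateway's built-in limiter) once you run more than one replica.

```python
import time

class TokenBucket:
    """Allows a steady `rate` requests/second with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

class RateLimiter:
    """One bucket per authenticated client ID (for example, the owner of an API key)."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets = {}

    def allow(self, client_id: str) -> bool:
        bucket = self.buckets.get(client_id)
        if bucket is None:
            bucket = self.buckets[client_id] = TokenBucket(self.rate, self.capacity, self.clock)
        return bucket.allow()
```

Passing the clock in as a parameter keeps the limiter deterministic in tests; a rejected request would typically map to an HTTP 429 response.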

Step 5: Implement Continuous Monitoring and Threat Detection

You need ongoing monitoring of model behavior, API usage, data access patterns, and infrastructure health.

- Set up alerts for anomalies like unusual query volumes, unexpected data access, or model output patterns that deviate from baselines.

- Track AI-specific threat signals including repeated querying of the same model endpoint at scale, unusual inference latency spikes, and unexpected changes in model output distributions.
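To detect model output patterns that deviate from baselines, one common metric is the Population Stability Index (PSI), which compares the share of each predicted class in a recent window against a baseline window: PSI = Σ (current − baseline) × ln(current / baseline). The sketch assumes categorical outputs and hypothetical class names; the 0.2 alert threshold is a widely used rule of thumb, not a standard, and should be tuned for your model.

```python
import math
from collections import Counter

def psi(baseline, current, categories) -> float:
    """Population Stability Index between two categorical samples.

    A small epsilon keeps empty categories from causing division by zero.
    """
    eps = 1e-6
    b_counts, c_counts = Counter(baseline), Counter(current)
    total = 0.0
    for cat in categories:
        b = max(b_counts[cat] / len(baseline), eps)
        c = max(c_counts[cat] / len(current), eps)
        total += (c - b) * math.log(c / b)
    return total

def drift_alert(baseline, current, categories, threshold=0.2) -> bool:
    # 0.2 is a rule-of-thumb cutoff; tune it against your own traffic.
    return psi(baseline, current, categories) > threshold

if __name__ == "__main__":
    cats = ["approve", "deny"]
    baseline = ["approve"] * 80 + ["deny"] * 20
    this_hour = ["approve"] * 40 + ["deny"] * 60
    print(round(psi(baseline, this_hour, cats), 3), drift_alert(baseline, this_hour, cats))
```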

Platforms like Aptly Tech help teams centralize monitoring, enforce governance policies, and manage secure AI deployment across cloud environments. This reduces the operational burden of running these controls at scale.

Step 6: Ensure Governance and Compliance

Compliance is essential for enterprise AI systems.

- Align your infrastructure with the regulations and compliance frameworks that apply to your business, such as GDPR, HIPAA, and SOC 2.

- Build compliance checks into your pipelines so they run automatically.

- Use automated policy enforcement to catch violations before they become regulatory problems.
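Automated compliance checks are often written as policy as code. The sketch below evaluates a hypothetical deployment descriptor against four illustrative rules (encryption at rest, a minimum TLS version, an approved-region list standing in for a data-residency requirement, and audit logging) and returns every violation so the pipeline stage can fail. Dedicated policy engines such as Open Policy Agent serve the same purpose at scale.

```python
# Each rule inspects a hypothetical deployment descriptor and returns an
# error message, or None when the config complies.
ALLOWED_REGIONS = {"eu-west-1", "eu-central-1"}  # hypothetical data-residency rule

def check_encryption(cfg):
    if not cfg.get("storage", {}).get("encrypted", False):
        return "storage must be encrypted at rest"

def check_tls(cfg):
    if cfg.get("endpoint", {}).get("min_tls") not in ("1.2", "1.3"):
        return "endpoint must require TLS 1.2 or newer"

def check_region(cfg):
    if cfg.get("region") not in ALLOWED_REGIONS:
        return f"region {cfg.get('region')!r} is outside the approved list"

def check_logging(cfg):
    if not cfg.get("audit_logging", False):
        return "audit logging must be enabled"

RULES = [check_encryption, check_tls, check_region, check_logging]

def evaluate(cfg: dict) -> list:
    """Run every rule; a non-empty result should fail the pipeline stage."""
    return [msg for rule in RULES if (msg := rule(cfg)) is not None]

if __name__ == "__main__":
    cfg = {"region": "us-east-1", "storage": {"encrypted": True},
           "endpoint": {"min_tls": "1.3"}, "audit_logging": True}
    violations = evaluate(cfg)
    print(violations or "compliant")
```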

Quick-Start Security Checklist for AI Teams

Use this checklist to audit your current secure AI infrastructure and identify immediate gaps.

Foundation

- Encrypt all data at rest and in transit

- Implement IAM with least-privilege access across all systems

- Enable comprehensive audit logging from day one

Pipeline

- Harden CI/CD pipelines with proper secrets management

- Validate and sanitize all training data inputs

- Sign and version all model artifacts

Governance

- Define a regulatory compliance matrix relevant to your industry

- Create model cards for every model moving to production

- Conduct quarterly AI risk assessments

Operations

- Deploy real-time inference monitoring with anomaly alerts

- Build and test an AI-specific incident response playbook

- Schedule adversarial red-team exercises on production models

Securing AI in Multi-Cloud and Hybrid Environments

Many organizations don’t stick to just one cloud. You might train on AWS but run inference on Azure. Keeping networking and security controls consistent across these environments is challenging. The table below summarizes the main challenges of distributed AI systems and how to address them.

| Key Challenges | Solutions |
|---|---|
| Inconsistent security policies across clouds | Use unified policy enforcement |
| Increased attack surface | Implement secure networking controls |
| Complex networking configurations | Apply software-defined security layers |

By applying these solutions, you can keep your AI workloads secure even when data moves between platforms.

What Are Common Vulnerabilities in AI Systems (and How to Fix Them)

Knowing what can go wrong is the first step toward preventing it. Here are the most common vulnerabilities found in production AI systems:

Data Poisoning

An attacker introduces malicious or misleading data into your training pipeline. The model learns incorrect patterns, leading to degraded performance or biased outputs.

Fix:

- Validate and sanitize all data inputs

- Track data provenance

- Monitor model behavior for unexpected drift after training
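Input validation can include a statistical screen for crude poisoning. This sketch flags values of a single numeric feature whose modified z-score, based on the median absolute deviation (MAD), exceeds 3.5, a commonly cited cutoff. It is only a first filter under strong assumptions: targeted or clean-label poisoning is designed to look normal and will not be caught this way, which is why provenance tracking and drift monitoring still matter.

```python
import statistics

def flag_outliers(values, threshold=3.5):
    """Return indices whose modified z-score exceeds the threshold.

    The median and MAD are robust: a handful of extreme injected points
    cannot drag them around the way they can shift a mean and std dev.
    """
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        # More than half the values are identical; flag anything different.
        return [i for i, v in enumerate(values) if v != median]
    return [i for i, v in enumerate(values) if 0.6745 * abs(v - median) / mad > threshold]

if __name__ == "__main__":
    transaction_amounts = [10.0, 10.5, 9.8, 10.2, 9.9, 10.1, 250.0, 10.3]
    print("suspicious rows:", flag_outliers(transaction_amounts))
```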

Model Inversion and Extraction

Repeated queries to a model API can let an attacker reconstruct training data or create a functional copy of your model.

Fix:

- Rate limit your APIs

- Monitor for high-volume repetitive queries

- Limit the detail in model outputs where possible
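Two of these fixes fit in a few lines. The hypothetical `coarse_prediction` returns only the top label with a rounded confidence instead of the full probability vector, which reduces the signal each query leaks, and `QueryMonitor` flags clients whose query count within a sliding time window exceeds a limit. The window size and limit are assumptions to tune against your real traffic.

```python
from collections import defaultdict, deque

def coarse_prediction(probabilities: dict, decimals: int = 1) -> dict:
    """Return the top label and a rounded confidence, not every class score."""
    label = max(probabilities, key=probabilities.get)
    return {"label": label, "confidence": round(probabilities[label], decimals)}

class QueryMonitor:
    """Flags clients that send more than `max_queries` within `window_s` seconds."""

    def __init__(self, window_s: float, max_queries: int):
        self.window_s = window_s
        self.max_queries = max_queries
        self.history = defaultdict(deque)  # client_id -> timestamps in the window

    def record(self, client_id: str, ts: float) -> bool:
        q = self.history[client_id]
        q.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while q and q[0] <= ts - self.window_s:
            q.popleft()
        return len(q) > self.max_queries

if __name__ == "__main__":
    print(coarse_prediction({"cat": 0.7134, "dog": 0.2511, "fox": 0.0355}))
```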

API Exploitation

Insecure API endpoints are one of the most common entry points for attacks on AI systems. Missing authentication, insufficient rate limiting, and inadequate input validation all create risk.

Fix:

- Apply authentication

- Rate limit your APIs

- Validate the inputs

- Test security regularly
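For authentication, a common pattern is to store only a hash of each API key and compare hashes in constant time. The sketch below uses an in-memory dictionary and hypothetical function names. An unsalted SHA-256 is reasonable here only because the keys are long random values from `secrets.token_urlsafe`, unlike user-chosen passwords. It also shows a basic input check (a request-size cap) returning HTTP-style status codes.

```python
import hashlib
import hmac
import secrets

KEY_HASHES = {}              # client_id -> SHA-256 of its key (hypothetical store)
MAX_BODY_BYTES = 64 * 1024   # hypothetical request-size limit

def create_key(client_id: str) -> str:
    """Generate a random key, keep only its hash, and return the key once."""
    key = secrets.token_urlsafe(32)
    KEY_HASHES[client_id] = hashlib.sha256(key.encode()).hexdigest()
    return key

def authenticate(client_id: str, presented_key: str) -> bool:
    stored = KEY_HASHES.get(client_id)
    if stored is None:
        return False
    presented = hashlib.sha256(presented_key.encode()).hexdigest()
    return hmac.compare_digest(stored, presented)  # constant-time comparison

def check_request(client_id: str, presented_key: str, body: bytes) -> int:
    """Authenticate first, then validate input size; return an HTTP-style status."""
    if not authenticate(client_id, presented_key):
        return 401
    if len(body) > MAX_BODY_BYTES:
        return 413
    return 200
```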

Prompt Injection (LLMs)

In large language model applications, attackers embed instructions in user inputs that override your intended system prompts. This can cause models to leak confidential information or perform unintended actions.

Fix:

- Implement input filtering

- Use system-level guardrails

- Monitor outputs for policy violations
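A minimal input-screening sketch is shown below, with a strong caveat: pattern lists are easy to evade by rephrasing, so treat a match as a signal to block or log, not as proof that unmatched input is safe. The patterns and the 4,000-character limit are illustrative assumptions, and `build_messages` assumes a generic chat-style message list (not any particular vendor's API) that keeps user text out of the system prompt.

```python
import re

# Illustrative patterns only; attackers rephrase easily.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"(reveal|print|show) (me )?(your|the) (system )?prompt", re.I),
    re.compile(r"you are now (in )?developer mode", re.I),
]

def screen_input(user_text: str, max_chars: int = 4000):
    """Return (allowed, reasons) for a user message before it reaches the model."""
    reasons = []
    if len(user_text) > max_chars:
        reasons.append("input too long")
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(user_text):
            reasons.append(f"matched: {pattern.pattern}")
    return (not reasons, reasons)

def build_messages(system_prompt: str, user_text: str) -> list:
    """Keep user text in its own message instead of concatenating it into the system prompt."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]
```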

Weak Access Controls

Overpermissioned service accounts and shared credentials are among the most common causes of AI data breaches.

Fix:

- Audit all service account permissions quarterly

- Enforce least privilege

- Rotate credentials regularly
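A quarterly permission audit can start from data you already have: the permissions granted to each service account and the permissions actually used in access logs during the review period. The sketch below uses a hypothetical `ServiceAccount` data model and flags unused grants, keys older than a 90-day rotation window (an example policy; adjust it to yours), and credentials shared across workloads.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class ServiceAccount:
    name: str
    granted: set           # permissions currently attached
    used: set              # permissions seen in access logs during the review period
    key_created: datetime
    shared_by: int = 1     # number of workloads using the same credential

def audit(account: ServiceAccount, now: datetime, max_key_age_days: int = 90) -> list:
    findings = []
    unused = sorted(account.granted - account.used)
    if unused:
        findings.append(f"{account.name}: remove unused permissions {unused}")
    if now - account.key_created > timedelta(days=max_key_age_days):
        findings.append(f"{account.name}: rotate key older than {max_key_age_days} days")
    if account.shared_by > 1:
        findings.append(f"{account.name}: credential shared by {account.shared_by} workloads")
    return findings

if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    trainer = ServiceAccount("trainer", {"dataset:read", "dataset:write"}, {"dataset:read"},
                             now - timedelta(days=120))
    for finding in audit(trainer, now):
        print(finding)
```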

Tools, Platforms, and AI Security Companies

You don’t have to build everything from scratch. Many AI security companies and tools are available to help.

Data Security Tools

These handle encryption, tokenization, data masking, and secure data pipeline management.

Examples include cloud-native key management services (AWS KMS, Azure Key Vault) and purpose-built data security platforms.

Model Monitoring Platforms

These track model behavior in production, detect drift and anomalies, and alert teams to unexpected outputs. They are essential for ongoing AI workload security after deployment.

Cloud-Native Security Tools

Cloud providers offer native security services for identity management, network isolation, logging, and threat detection. These form the baseline for most cloud AI security architectures.

Many organizations rely on AI security companies like Aptly Tech to manage security at scale. These platforms help integrate MLOps security with governance to protect your AI workloads across any cloud.

Best Practices for Secure AI Infrastructure

To summarize, here are the non-negotiables for a secure machine learning infrastructure:

- Encrypt Everything: Don’t leave any data “naked,” even inside your private network.

- Adopt Zero Trust: Never trust a request by default.

- Automate Compliance: Use software to check for compliance gaps every day, not once a year.

- Test Regularly: Run “red team” exercises where you try to hack your own AI.

Securing Generative AI and LLM Applications

Generative AI introduces a new category of security challenges. Unlike traditional ML models, LLMs process open-ended natural language inputs, which creates risk vectors that do not exist in conventional systems.

Key Risks in Generative AI

- Prompt Injection: malicious instructions embedded in user inputs

- Data Leakage: models revealing information from their training data or system prompts

- Unsafe Outputs: models generating harmful, misleading, or policy-violating content

How to Secure LLM Applications in Enterprise Environments

- Implement input validation and filtering before prompts reach the model

- Use output filtering to catch policy violations before responses are returned to users

- Apply system-level guardrails that cannot be overridden by user inputs

- Monitor all interactions and maintain logs for audit and incident response purposes

- Restrict what external systems the LLM can access or call
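Two of these controls can be sketched together: an allowlist that decides which tools the application will execute when the model requests them, and an output filter that blocks responses containing a canary string planted in the system prompt (a sign the prompt is leaking) and otherwise redacts email addresses. The tool names, the canary value, and the regex are hypothetical, and such filters complement, rather than replace, the guardrails listed above.

```python
import re

# Hypothetical tool names; only these may be executed on the model's behalf.
ALLOWED_TOOLS = {"search_kb", "get_order_status"}

# A random marker placed in the system prompt; seeing it in output means the prompt leaked.
CANARY = "cnry-7f3a9c"
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def authorize_tool_call(tool_name: str) -> bool:
    """Deny any tool the application has not explicitly approved."""
    return tool_name in ALLOWED_TOOLS

def filter_output(text: str):
    """Return (safe_text, blocked): block canary leaks, otherwise redact emails."""
    if CANARY in text:
        return ("Sorry, I can't help with that request.", True)
    return (EMAIL_RE.sub("[REDACTED EMAIL]", text), False)
```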

Conclusion

A secure AI infrastructure requires attention across data, models, pipelines, and deployment environments. You cannot treat security as an add-on once systems are live. It must be part of your design from the start.

By applying zero trust principles, strengthening IAM, and monitoring systems continuously, you reduce risks significantly. You also improve compliance readiness and operational reliability.

Whether you build internally or use platforms like Aptly Tech, adopting a security-first mindset helps keep your AI systems scalable and protected over time.

Don’t let security vulnerabilities stall your AI initiatives.

If you are looking to scale a secure AI infrastructure, contact us today to start your journey toward a more resilient AI future.

FAQs

Q: How do enterprises secure AI infrastructure?

Enterprises secure their systems by implementing a layered defense strategy. This includes data encryption, strict IAM, secure MLOps pipelines, and continuous monitoring of model behavior.

Q: How to secure data used in AI training?

You can secure training data by using data anonymization, encryption at rest and in transit, and strict access controls. It is also important to use data lineage tools to track the source and movement of all datasets.

Q: What does a secure AI architecture look like?

A secure architecture is composed of protected layers: a secure data lake, a hardened model development environment, protected CI/CD pipelines, and secure API endpoints for model inference.

Q: What are the top risks in AI infrastructure?

The most common risks include data breaches, model theft (IP theft), data poisoning during training, and adversarial attacks that cause models to produce incorrect or harmful outputs.

Q: What is zero trust AI infrastructure?

It is a security model where no user or system is trusted by default. Every access request to a model or dataset must be authenticated, authorized, and continuously validated.

Q: How to secure generative AI applications?

To secure GenAI, you should implement prompt filtering to prevent injections, use output guardrails to block sensitive data leakage, and ensure all API calls are authenticated and logged.
