Intro: The Real Risk Is Not the Model. It Is the Data Around It.

Most enterprise AI incidents do not start with a clever adversarial attack. They start with a misconfigured vector store that exposes customer records to any authenticated user, or a RAG pipeline that retrieves documents without checking who originally had access. Secure enterprise AI infrastructure is built to prevent exactly that, and the stakes are not theoretical.

ENISA’s 2024 AI Threat Landscape identifies data poisoning, model inversion, and inference-time data leakage as the three highest-impact risk categories for enterprise AI. All three are architecture problems. This guide covers the architecture, the data pipeline controls, the governance model, and the checklist your team can use to close gaps today.

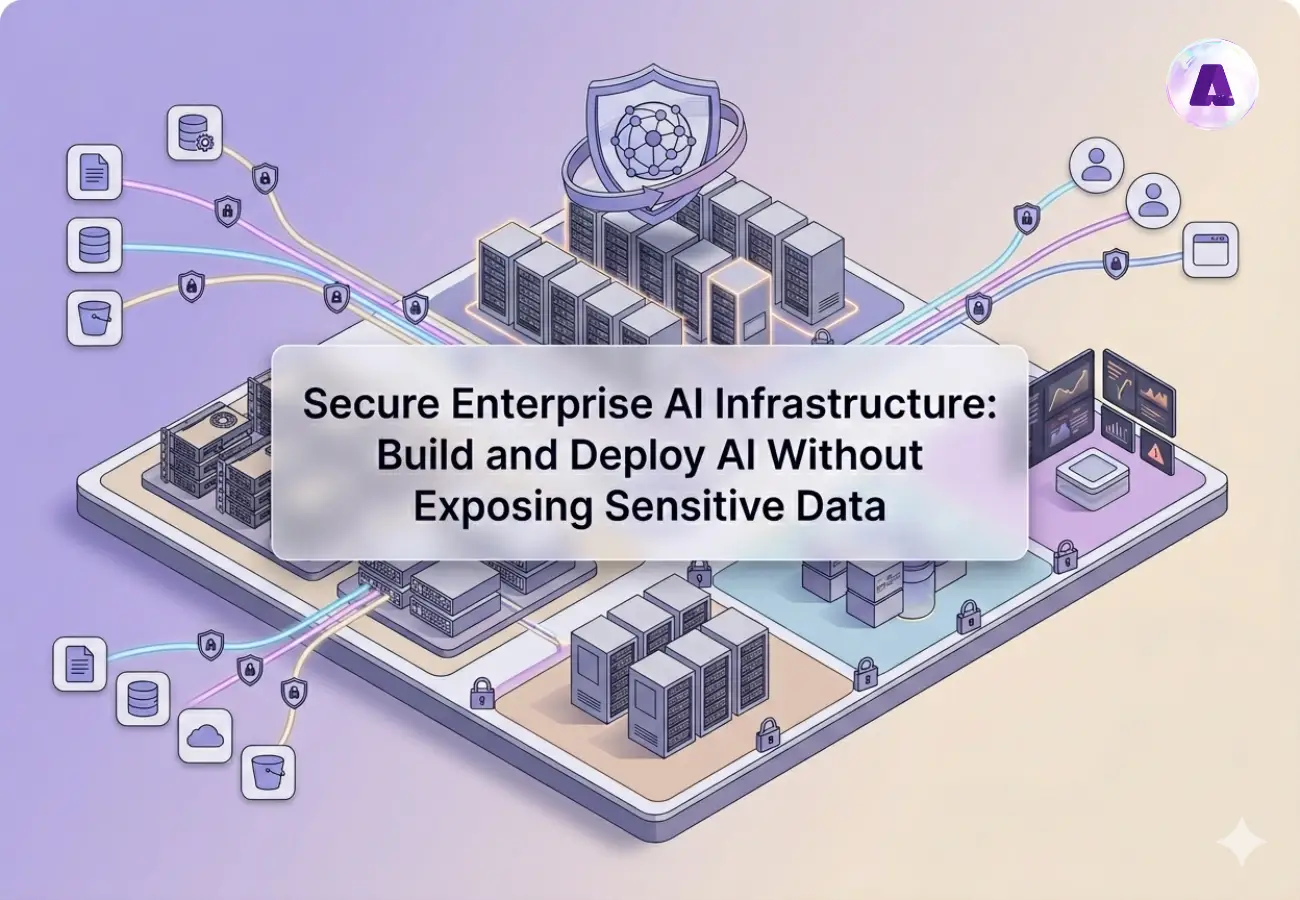

What Is Secure Enterprise AI Infrastructure?

Secure enterprise AI infrastructure is the combination of network isolation, compute segmentation, identity controls, data protection patterns, and governance processes that allow an organization to run AI workloads on sensitive data without uncontrolled exposure.

It differs from general cloud security because AI systems process and sometimes memorize data in ways traditional applications do not. An LLM fine-tuned on internal documents can surface fragments of those documents in outputs, even when the source files are not directly accessible. That is why enterprise AI data privacy solutions require controls at the data pipeline layer, not just at the API gateway. The core components: hardened network architecture, strong identity management, data encryption, model governance with audit trails, and continuous threat detection.

Check our in-depth guide on Data Center Security Best Practices.

What Are the Risks of Enterprise AI Infrastructure?

The AI attack surface is broader than most organizations model before deployment. Three categories need immediate attention.

How to Prevent Data Leaks in AI Pipelines

RAG pipelines retrieve context from vector stores at inference time. If those retrieval calls do not enforce document-level access controls, users can access information that their permissions would otherwise block. The fix: enforce authorization at the retrieval layer, not just at the application layer. OWASP’s Top 10 for LLMs lists insecure output handling and excessive agency as leading pipeline vulnerabilities.
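To make the retrieval-layer fix concrete, here is a minimal sketch. It assumes each indexed chunk carries the access control list of its source document, copied at indexing time. The `Chunk` type, the group names, and the sample data are hypothetical and not tied to any particular vector store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A retrieved chunk plus the ACL copied from its source document at indexing time."""
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


def authorize_chunks(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Keep only chunks whose source document the caller is allowed to read."""
    # Default deny: a chunk with an empty ACL matches no one.
    return [c for c in chunks if c.allowed_groups & user_groups]


def build_context(chunks: list[Chunk], user_groups: set[str]) -> str:
    """Assemble prompt context from authorized chunks only."""
    return "\n\n".join(c.text for c in authorize_chunks(chunks, user_groups))


# Hypothetical similarity-search results, before authorization.
candidates = [
    Chunk("hr-001", "Salary bands for 2024", frozenset({"hr"})),
    Chunk("kb-042", "VPN setup guide", frozenset({"hr", "engineering"})),
    Chunk("tmp-007", "Unclassified draft", frozenset()),
]
```

With these sample chunks, a caller in the `engineering` group receives only the VPN guide. The sketch filters after the search for readability. Where the vector store supports metadata filters, pushing the same check into the query is usually preferable, so that unauthorized chunks never leave the store.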

LLM Jacking, Prompt Injection, and Memorization

Prompt injection is ranked first (LLM01) in the OWASP Top 10 for LLM Applications. In enterprise contexts, a crafted input can manipulate model behavior and in some cases extract data from connected retrieval systems. Beyond injection, research from Google DeepMind demonstrates that LLMs can reproduce verbatim sequences from training data under specific conditions, making data anonymization before ingestion a hard requirement, not an option.

How to Manage AI Security in Hybrid Cloud Environments

The hybrid cloud AI footprint multiplies the attack surface: on-premises GPU clusters, cloud-based inference endpoints, and cross-region vector stores all need consistent identity and network controls. Misconfigurations that would be caught immediately in a physical facility frequently slip through in cloud deployments because configuration management processes have not been extended to cover AI-specific services.

Designing Enterprise AI Security Architecture

Best Practices for Enterprise AI Security Architecture

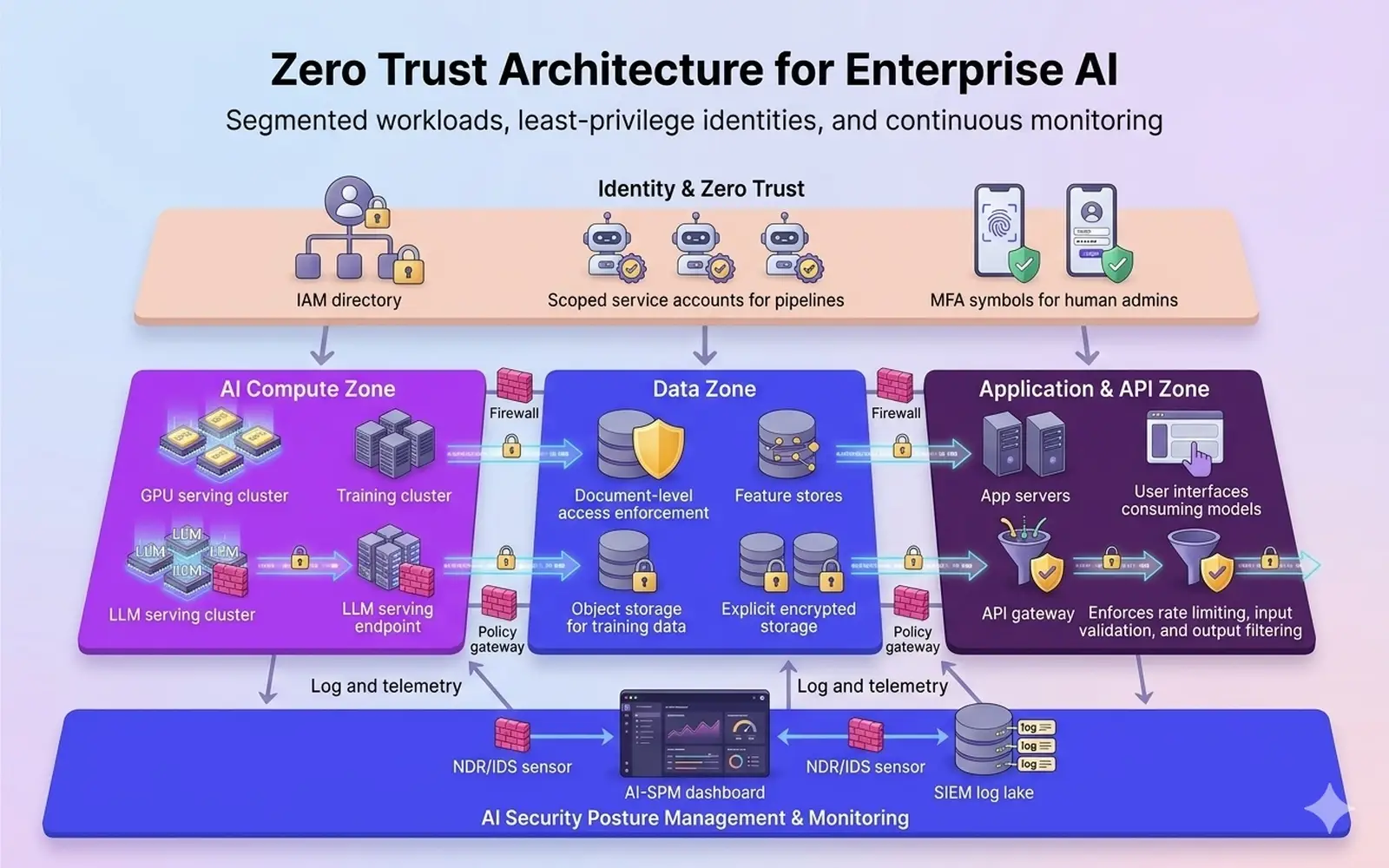

AI infrastructure security best practices start with network segmentation: GPU inference clusters, vector databases, and model serving endpoints each belong in dedicated VPCs with explicit firewall rules at every boundary. Sharing network segments between AI workloads and general production traffic is one of the most common configuration errors in early-stage secure enterprise AI infrastructure deployments.

How to Implement Zero Trust in AI Infrastructure

Zero trust for enterprise AI means no workload or user is trusted by default. Every service account used by an AI pipeline needs a scoped identity with least-privilege permissions, automatic rotation, and request-level logging. NIST SP 800-207 is the reference architecture. Applied to AI: all inter-service communication must be authenticated; privileged sessions must be recorded; and network policy defaults to deny-all unless explicitly permitted.
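The deny-all default and request-level logging can be illustrated with a small policy check. This is a sketch under stated assumptions, not an implementation of NIST SP 800-207: the service names, the `Rule` type, and the in-memory `audit_log` are all hypothetical stand-ins for a real policy engine and log sink.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One explicit allow rule: which identity may perform which action on which service."""
    source: str
    target: str
    action: str


# Hypothetical allow-list; anything not listed here is denied.
ALLOW_RULES = frozenset({
    Rule("rag-api", "vector-db", "query"),
    Rule("rag-api", "llm-endpoint", "infer"),
    Rule("ingest-job", "vector-db", "write"),
})

audit_log: list[dict] = []


def is_allowed(source: str, target: str, action: str, authenticated: bool) -> bool:
    """Evaluate one request: unauthenticated or unlisted calls are denied."""
    decision = authenticated and Rule(source, target, action) in ALLOW_RULES
    # Request-level logging: record every decision, not only denials.
    audit_log.append(
        {"source": source, "target": target, "action": action, "allowed": decision}
    )
    return decision
```

Here `rag-api` may query the vector store but not write to it, and the same query is refused if the caller is not authenticated. The point of the design is that permissions are scoped per identity and per action, so a compromised inference service cannot modify the index.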

For Secure GenAI Deployment, model endpoints need API gateway controls including rate limiting, input validation, and output filtering that checks responses for potential data leakage before returning them.
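Two of those gateway controls, rate limiting and input validation, can be sketched as follows. The token-bucket approach, the `MAX_PROMPT_CHARS` limit, and the validation rules are illustrative assumptions. Production gateways typically provide these features as configuration rather than application code.

```python
import time
from typing import Optional


class TokenBucket:
    """Token-bucket limiter: `capacity` is the burst size, refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, now: Optional[float] = None):
        self.capacity = capacity
        self.rate = rate
        self.tokens = float(capacity)
        self.last = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


MAX_PROMPT_CHARS = 4000  # hypothetical limit, tune per endpoint


def validate_prompt(prompt: str) -> bool:
    """Reject empty, oversized, or control-character-laden input."""
    if not prompt.strip() or len(prompt) > MAX_PROMPT_CHARS:
        return False
    return all(ch.isprintable() or ch in "\n\t" for ch in prompt)
```

A gateway would keep one bucket per API key or service identity and call `allow()` before forwarding a request. The optional `now` argument exists only so the limiter can be tested without waiting on a real clock.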

In practice, implementing this level of segmentation and zero trust across hybrid AI environments requires deep infrastructure and operational expertise. This is where Aptly Technology Corporation works closely with enterprises to design and operationalize secure AI architectures.

Protecting Sensitive Data in AI Pipelines

How to Ensure Data Privacy in Enterprise AI

At ingestion: apply data anonymization and tokenization before data reaches any AI training pipeline. Differential privacy adds calibrated noise to datasets so statistical properties useful for training are preserved without individual records being recoverable. Apple’s differential privacy research illustrates what this looks like in production at scale.
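"Calibrated noise" is easiest to see on a single released statistic. The sketch below applies the Laplace mechanism to a counting query, where the noise scale is the query's sensitivity divided by the privacy budget epsilon. The function names and the sample records are hypothetical. This illustrates the idea only; differentially private model training (for example DP-SGD) is a different mechanism and is not shown here.

```python
import random
from typing import Callable, Iterable


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(
    records: Iterable[dict],
    predicate: Callable[[dict], bool],
    epsilon: float,
    rng: random.Random,
) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    # A counting query has sensitivity 1: adding or removing one record
    # changes the result by at most 1, so the noise scale is 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical records; only the noisy count would leave the trusted boundary.
patients = [{"age": 34}, {"age": 61}, {"age": 70}, {"age": 45}, {"age": 66}]
noisy = dp_count(patients, lambda r: r["age"] >= 60, epsilon=1.0, rng=random.Random())
```

Smaller values of epsilon mean more noise and stronger privacy. Averaged over many releases the result centers on the true count, which is why aggregate statistics stay useful while any single record's contribution is masked.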

Secure AI Infrastructure for Healthcare and Finance

For organizations operating under HIPAA, PCI DSS, or financial services model risk management guidance, secure enterprise AI infrastructure must satisfy jurisdiction-specific data residency and sovereignty requirements. Federated learning allows model training across distributed data sources without centralizing sensitive records. Each node trains locally and shares only model gradients, keeping patient or financial data within its regulated boundary.
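The "train locally, share only gradients" pattern can be shown with a toy one-parameter model. This is a minimal sketch of gradient averaging, assuming two sites with hypothetical data that follows y = 3x. Real federated systems add secure aggregation, client sampling, and often differential privacy, because shared gradients can still leak information about the underlying records.

```python
def local_gradient(w: float, data: list[tuple[float, float]]) -> float:
    """Gradient of mean squared error for y ~ w * x, computed on one node's private data."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)


def federated_round(
    w: float, nodes: list[list[tuple[float, float]]], lr: float
) -> float:
    """One round: each node reports (gradient, sample_count); raw records never leave the node."""
    updates = [(local_gradient(w, data), len(data)) for data in nodes]
    total = sum(n for _, n in updates)
    # Weight each node's gradient by how many samples produced it.
    avg_grad = sum(g * n for g, n in updates) / total
    return w - lr * avg_grad


# Hypothetical private datasets held by two sites; both follow y = 3x.
site_a = [(1.0, 3.0), (2.0, 6.0)]
site_b = [(3.0, 9.0)]

w = 0.0
for _ in range(200):
    w = federated_round(w, [site_a, site_b], lr=0.05)
```

After the loop, `w` has converged to roughly 3.0 even though the coordinating server only ever saw gradients and sample counts.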

How to Deploy AI Without Exposing Confidential Data

At inference time: implement prompt filtering to strip sensitive patterns from inputs before they reach the model, and output monitoring to flag responses containing unexpected data. For RAG pipelines, enforce document-level access controls at retrieval time. For the most sensitive workloads, private deployments in isolated VPCs or on-premises environments remove public model endpoints from the exposure surface.
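A pattern-based filter is one simple way to implement both the input and the output side of this control. The sketch below is a starting point under clear assumptions: the three regular expressions are hypothetical examples, not a complete PII detector, and a real deployment would derive its patterns from its own data classification policy.

```python
import re

# Hypothetical patterns; extend or replace these to match your classification policy.
SENSITIVE_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders and report which pattern types fired."""
    hits = []
    for label, pattern in SENSITIVE_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            hits.append(label)
    return text, hits
```

The same `redact` function can run on prompts before they reach the model and on responses before they are returned. The list of labels it returns is what an output monitor would log or alert on.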

Secure AI Deployment and Operations in Production

How to Secure AI Models in Production Environments

Secure enterprise AI deployment requires treating model serving infrastructure with the same hardening discipline as any production database. Maintain versioned model registries with documented training data lineage and rollback capability. Every model promoted to a production endpoint needs an associated risk assessment, an approval record, and a named owner accountable for monitoring its outputs.
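Those promotion requirements can be enforced in code rather than by convention. The following sketch assumes a simple in-memory registry. The `ModelVersion` fields and the `ModelRegistry` class are illustrative names, not the schema of any specific registry product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    """Registry entry; field names are illustrative."""
    name: str
    version: int
    training_data_lineage: str = ""
    risk_assessment_id: str = ""
    approved_by: str = ""
    owner: str = ""

    def missing_requirements(self) -> list[str]:
        required = {
            "training_data_lineage": self.training_data_lineage,
            "risk_assessment_id": self.risk_assessment_id,
            "approved_by": self.approved_by,
            "owner": self.owner,
        }
        return [name for name, value in required.items() if not value]


class ModelRegistry:
    def __init__(self) -> None:
        self._promoted: list[ModelVersion] = []  # promotion history, oldest first

    @property
    def current(self) -> Optional[ModelVersion]:
        return self._promoted[-1] if self._promoted else None

    def promote(self, candidate: ModelVersion) -> None:
        """Refuse promotion unless lineage, risk assessment, approval, and owner are recorded."""
        missing = candidate.missing_requirements()
        if missing:
            raise ValueError(
                f"cannot promote {candidate.name} v{candidate.version}: missing {missing}"
            )
        self._promoted.append(candidate)

    def rollback(self) -> ModelVersion:
        """Revert production to the previously promoted version."""
        if len(self._promoted) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self._promoted.pop()
        return self._promoted[-1]
```

Calling `promote` with an entry that lacks any of the four fields raises a `ValueError`, so an undocumented model cannot reach production through this path. `rollback` returns the version that becomes current again.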

How to Secure LLMs in Enterprise Environments

AI Security Posture Management (AI-SPM) provides continuous visibility into exposed endpoints, abnormal inference patterns, model drift, and access anomalies. Extending your SIEM to ingest model serving logs, vector database access logs, and API gateway telemetry, with detection rules tuned for prompt injection signatures and bulk data extraction patterns, is the operational standard for secure machine learning infrastructure in 2025.
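As an example of what a bulk extraction rule might look like, the sketch below flags any principal that retrieves an unusually large number of distinct documents within a sliding time window. The class name, thresholds, and log fields are assumptions for illustration. In practice this logic would usually be expressed in your SIEM's own rule language over vector database access logs.

```python
from collections import defaultdict, deque


class BulkExtractionDetector:
    """Flag principals that fetch more than `max_docs` distinct documents in `window_s` seconds."""

    def __init__(self, max_docs: int, window_s: float):
        self.max_docs = max_docs
        self.window_s = window_s
        self.events = defaultdict(deque)  # principal -> deque of (timestamp, doc_id)

    def observe(self, principal: str, doc_id: str, ts: float) -> bool:
        """Record one retrieval event; return True if the principal is over threshold."""
        q = self.events[principal]
        q.append((ts, doc_id))
        # Drop events that have aged out of the window.
        while q and q[0][0] <= ts - self.window_s:
            q.popleft()
        distinct = {d for _, d in q}
        return len(distinct) > self.max_docs
```

Counting distinct documents rather than raw requests keeps a user who re-reads the same few files from triggering the rule, while a script walking the whole index trips it quickly.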

AI Governance, Compliance, and Risk Management

How to Build Compliant AI Infrastructure for Enterprises

Map AI systems to compliance frameworks at design time. ISO/IEC 27001 covers the information security baseline. NIST’s AI Risk Management Framework provides AI-specific governance structure. AI risk registers should capture: what data the system accesses, what decisions it influences, its failure modes and business impact, and the controls in place for each. Review these quarterly; every retraining cycle is a risk event that should trigger reassessment.
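A risk register entry with the fields listed above, plus the two review triggers, might be modeled like this. It is a sketch only: the `RiskEntry` field names are hypothetical, and "quarterly" is approximated as 90 days.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

REVIEW_INTERVAL = timedelta(days=90)  # "quarterly", approximated as 90 days


@dataclass
class RiskEntry:
    """One AI system in the risk register; field names are illustrative."""
    system: str
    owner: str
    data_accessed: list[str]
    decisions_influenced: list[str]
    failure_modes: list[str]
    controls: list[str]
    last_reviewed: date
    last_retrained: Optional[date] = None

    def needs_review(self, today: date) -> bool:
        """Due if the quarterly interval has elapsed or the model was retrained since last review."""
        overdue = today - self.last_reviewed >= REVIEW_INTERVAL
        retrained_since = (
            self.last_retrained is not None and self.last_retrained > self.last_reviewed
        )
        return overdue or retrained_since
```

Running `needs_review` across the register on a schedule turns the quarterly review and the retraining trigger into a checkable list instead of a calendar reminder.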

Enterprise AI governance also means defining clear policies for model usage, data access, and human oversight before an incident forces the conversation. Accountability must be explicit, not assumed.

Enterprise AI Security Checklist for CIOs

What are the key components of a secure enterprise AI infrastructure? The table below maps the answer to a practical status check.

| Area | Control | Maturity Benchmark |
|---|---|---|
| Architecture | Network segmentation for AI workloads | Dedicated VPC; GPU clusters isolated from general prod |
| Architecture | Zero trust identity for all AI services | Scoped service accounts; MFA; full request logging |
| Data Protection | Encryption at rest and in transit | AES-256 at rest; TLS 1.3 in transit; HSM-managed keys |
| Data Protection | Anonymization and tokenization at ingestion | PII stripped or tokenized before training pipeline entry |
| Model Security | Secure APIs with input and output filtering | Prompt filtering; output redaction for sensitive patterns |
| Model Security | Versioned model governance and rollback | Registry with lineage docs; rollback tested annually |
| Operations | AI-SPM and SIEM with AI workload logs | Detection rules for injection and bulk extraction patterns |
| Governance | Compliance framework mapping | ISO 27001 or SOC 2 current; NIST AI RMF applied |
| Governance | AI risk register with quarterly review | Each system has an owner, failure mode catalog, remediation log |

Conclusion: Secure Enterprise AI Is a Program, not a One-Time Deployment

Secure enterprise AI infrastructure is not a configuration applied once at launch. Models get retrained. Data sources change. Retrieval pipelines expand. Organizations that get this right treat each change as a new risk event and a trigger for reassessment.

The four pillars apply consistently: hardened architecture with zero trust controls, data protection at every pipeline stage, model governance with audit trails, and AI-SPM for ongoing visibility. Map your environment against the checklist above, identify the gaps with the highest blast radius, and address those first.

Enterprise AI governance and zero trust AI architecture are not future-state aspirations. They are the baseline for running AI on data that matters. Aptly Technology operates secure enterprise AI infrastructure for enterprises and hyperscale environments where security and uptime are simultaneously non-negotiable. To discuss your secure deployment roadmap, contact Aptly.

See also: GPU Datacenter Buildout Services, AI Infrastructure Managed Services, Managed Data Center Services

Frequently Asked Questions (FAQ):

- What is secure enterprise AI infrastructure?

- Secure enterprise AI infrastructure is the combination of network isolation, identity controls, data protection patterns, and governance processes that allows an organization to run AI workloads on sensitive data without uncontrolled exposure. It differs from general cloud security because AI models can memorize and inadvertently surface training data, and retrieval pipelines can bypass access controls if not explicitly designed to respect them.

- How to build a secure enterprise AI infrastructure?

- Start with a threat model: identify the data your AI system will access, the users and systems that interact with it, and the failure modes with the highest impact. Build inside a dedicated network segment with zero trust identity controls and document-level access enforcement on every retrieval layer. Ship governance policies alongside the architecture, not after deployment.

- How do enterprises secure AI infrastructure?

- Enterprises securing AI infrastructure combine network segmentation, zero trust architecture, least-privilege IAM, and continuous monitoring through AI-SPM platforms. The most effective programs extend existing SIEM and configuration management processes to cover AI-specific services rather than treating secure enterprise AI infrastructure as a separate security program.

- How to prevent data leakage in AI models?

- Apply data anonymization and tokenization before any sensitive data enters a training pipeline. At inference time, use prompt filtering and output monitoring to catch sensitive patterns in both inputs and responses. For RAG deployments, enforce document-level authorization at the retrieval layer. Research confirms that models can memorize and reproduce training data verbatim, making ingestion-time controls non-negotiable.

- What is zero trust AI architecture?

- Zero trust AI architecture applies the principle of verify-everything to AI workloads: every service account has scoped, least-privilege permissions; every inter-service call is authenticated; privileged sessions are recorded; and network policy defaults to deny-all. NIST SP 800-207 is the reference framework. In practice, this means no AI workload or user is trusted by virtue of being inside the network perimeter.

- How to protect enterprise data in AI pipelines?

- Encrypt data in transit with TLS 1.3 and at rest with AES-256 using externally managed keys. Tokenize or anonymize PII before pipeline ingestion. Enforce document-level access controls at retrieval time in RAG architectures. Monitor model outputs for data leakage patterns using output filtering rules calibrated to your data classification policy.

- What is the safest way to deploy AI in a company?

- Deploy inside a dedicated VPC or isolated on-premises environment with zero trust controls before expanding access. Start with a private deployment model, where the system has no external connectivity, and expand the surface only after a documented threat model and access policy have been approved. Skipping architecture to move faster consistently backfires.

- Can AI models leak confidential data?

- Published research demonstrates LLMs can reproduce verbatim sequences from training data under specific prompting conditions. RAG pipelines without retrieval-layer authorization can return content the user was never permitted to see. Data anonymization at ingestion and strict retrieval-layer access controls are the primary mitigations.

- How to build private AI infrastructure?

- Private enterprise AI infrastructure runs in isolated VPCs or fully on-premises environments with no external model endpoints. Key requirements: dedicated GPU infrastructure in a segmented network zone, on-premises vector stores with access controls matching your existing IAM, locally hosted LLMs, and all logging retained inside your security perimeter.

- How do I build a compliant AI system?

- Map AI systems to your applicable compliance frameworks at design time. For most enterprises: ISO 27001 for the information security baseline and the NIST AI Risk Management Framework for AI-specific governance. Document training data lineage, access controls, and decision logic. Assign a system owner accountable for periodic risk reassessment whenever the model is retrained, fine-tuned, or connected to a new data source.
