Introduction: Why Data Center Lifecycle Management Has Become a Board Level Priority

Most enterprises don’t fail at building data centers; they fail at managing the lifecycle that follows. The build phase is well understood: budgets get approved, vendors get selected, and hardware gets racked. What breaks down is everything after. Refresh cycles drift. Capacity planning falls behind demand. AI workloads land on infrastructure never designed for them. The gap isn’t in construction; it’s in the discipline that follows.

Deloitte projects that by 2035, power demand from AI data centers in the United States could grow more than thirtyfold, from 4 GW in 2024 to 123 GW. That is not an incremental trend. That is a different industry, building itself on top of the one that exists today.
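To put that projection in yearly terms, here is a minimal sketch that converts the two quoted figures into a growth multiple and an implied compound annual rate. It assumes smooth compounding between 2024 and 2035, which is a simplification; the function names are illustrative.

```python
# Figures quoted from the projection above.
START_GW = 4.0    # 2024
END_GW = 123.0    # 2035
YEARS = 2035 - 2024


def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


multiple = growth_multiple(START_GW, END_GW)
cagr = implied_annual_growth(START_GW, END_GW, YEARS)
print(f"Growth multiple: {multiple:.2f}x")
print(f"Implied compound annual growth: {cagr:.1%}")
```

Under that assumption, demand would need to grow by more than a third every year for eleven years in a row.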

Most enterprises are not fully ready for this. Refresh cycles still get triggered by failures rather than planning. Capacity planning lives in redundant spreadsheets. GPU infrastructure planning for AI datacenters gets bolted on late, after the architecture is already locked in. The result is predictable: overprovisioned hardware sitting idle next to underprovisioned environments that bottleneck AI workloads, and modernization projects running over time and budget.

A disciplined enterprise data center lifecycle management framework is what closes that gap, with defined stages, clear ownership, and measurable outcomes at every phase.

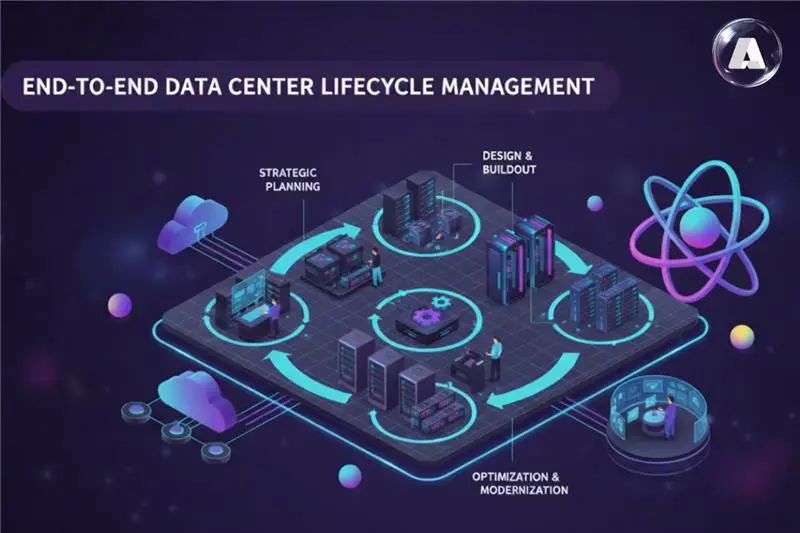

What is Data Center Lifecycle Management (DCLM)?

Data center lifecycle management (DCLM) is the end-to-end discipline of governing infrastructure assets, from data center planning and design through deployment, operations, optimization, and eventual decommissioning, in a structured, continuous cycle.

Think of it as the operating system beneath your infrastructure decisions. It answers four questions every enterprise IT leader faces:

- What do we build, and when? Demand forecasting, site selection, capacity planning.

- How do we run it without bleeding money? Operations, automation, and observability.

- When do we upgrade or retire it? Optimization, modernization, and end-of-life (EOL) planning.

- How do we fund the full cycle? TCO modeling, FinOps integration, asset recovery.

For enterprise DCLM, this matters more than ever because the assets being managed today bear little resemblance to simple server racks. GPU clusters, hybrid cloud data center architecture interconnects, software-defined networking, and liquid-cooled high-density pods all carry different performance profiles, obsolescence curves, and cost drivers. Assets this varied need a single, unified point of control.

Stage 1: Strategic Planning and Data Center Capacity

Every successful data center lifecycle begins well before a single server is racked. Strategic planning is where data center capacity planning, technology roadmapping, and financial modelling converge into a clear picture of what you need, when you need it, and what it will cost. Skipping this stage is the most expensive mistake in enterprise DCLM.

This stage covers:

- Demand forecasting: How much compute, storage, and network will you need in 12, 24, and 48 months? For AI-intensive organizations, this includes GPU cluster infrastructure projections, which can shift dramatically depending on model scale and inference volume.

- Site selection and power planning: Gartner predicts that by 2027, 75% of enterprises will restructure risk and security strategies due to AI infrastructure demands. Site decisions increasingly factor in power availability, renewable energy access, and proximity to talent.

- Hybrid cloud data center architecture decisions: Is workload X better served on premises, in colocation, or in cloud? Data center lifecycle management maps this by workload class, so the answer is not relitigated every budget cycle.

- Budget modeling: Capital vs. operating expenditure tradeoffs, refresh cadences, and total cost of ownership (TCO) over 5–7-year horizons.
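The demand forecasting step above can be sketched as a compound-growth projection over the 12, 24, and 48 month horizons. This is a minimal illustration, not a forecasting method: the starting GPU count and the three growth scenarios are hypothetical, and real demand rarely follows a constant rate.

```python
def project_demand(current_units: float, annual_growth: float, months: int) -> float:
    """Project demand `months` ahead, assuming constant compound annual growth."""
    return current_units * (1 + annual_growth) ** (months / 12)


# Hypothetical inputs: 64 GPUs in use today and three assumed growth scenarios.
CURRENT_GPUS = 64
SCENARIOS = {"low": 0.30, "base": 0.60, "high": 1.00}

for name, growth in SCENARIOS.items():
    forecast = {m: round(project_demand(CURRENT_GPUS, growth, m)) for m in (12, 24, 48)}
    print(f"{name:>4}: {forecast}")
```

Running several scenarios side by side makes the spread at 48 months visible, which is the point of the "can shift dramatically" caveat: the planning question is usually which range to build for, not which single number.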

Practitioners who shortchange this stage end up with infrastructure that is right-sized for today and wrong-sized for tomorrow, the costliest outcome in any data center infrastructure lifecycle.
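For the budget modeling item in this stage, a simple undiscounted TCO comparison across 5 and 7 year horizons might look like the sketch below. All figures are hypothetical, and the model deliberately ignores discounting, inflation, and mid-life upgrades.

```python
def total_cost_of_ownership(capex: float, annual_opex: float, years: int,
                            residual_fraction: float = 0.0) -> float:
    """Undiscounted TCO: purchase price plus running costs, minus resale value."""
    return capex + annual_opex * years - capex * residual_fraction


def annualized_tco(capex: float, annual_opex: float, years: int,
                   residual_fraction: float = 0.0) -> float:
    """TCO spread evenly over the years the asset is kept."""
    return total_cost_of_ownership(capex, annual_opex, years, residual_fraction) / years


# Hypothetical figures: 1.0M purchase price, 150k per year for power, support, and staff.
for years in (5, 7):
    total = total_cost_of_ownership(1_000_000, 150_000, years)
    yearly = annualized_tco(1_000_000, 150_000, years)
    print(f"{years}-year TCO: {total:,.0f} ({yearly:,.0f} per year)")
```

A longer horizon lowers the annualized figure in this model only because opex is held flat; in practice maintenance costs tend to rise as hardware ages, which is what the refresh cadence decision has to weigh.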

Stage 2: Data Center Planning & Design + Buildout Strategy

Once strategy is defined, data center planning and design translate business requirements into physical and logical architecture. This is where data center buildout strategy meets engineering reality, and where decisions made quickly tend to cause problems slowly.

Key design decisions at this stage:

- Power and cooling architecture: AI-optimized facilities increasingly require liquid cooling, direct-to-chip systems, or immersion cooling. A single H100 GPU can draw up to 700W, and an eight-GPU server can exceed 10kW once CPUs, networking, and fans are included. Conventional data centers were not built for thermal demands at that density.

- Network fabric: InfiniBand vs. Ethernet for GPU cluster interconnects, a decision that shapes latency, bandwidth, and scalability for years. See our deep dive on AI networking decisions for more context.

- Scalable data center architecture: Modular, pod-based designs that allow incremental expansion without full facility disruption are the preferred pattern in a mature data center lifecycle management approach, replacing monolithic builds.

- NVIDIA DGX Ready Lifecycle Management and validated reference architectures: Pre-validated, vendor-certified rack designs reduce deployment risk and shorten time to production. NVIDIA offers DGX Lifecycle Management specifically to address technology obsolescence in GPU infrastructure.
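The power figures in the cooling item above translate into rack planning with some basic arithmetic. The sketch below is an illustration under stated assumptions: eight GPUs at 700 W account for 5.6 kW on their own, the 10 kW per-server figure is taken from the text, and the rack power budgets are hypothetical.

```python
GPU_WATTS = 700         # per-GPU draw quoted in the text
GPUS_PER_SERVER = 8
SERVER_KW = 10.0        # assumed whole-server draw, per the "exceed 10kW" figure


def gpu_power_kw(gpu_count: int, watts_per_gpu: float = GPU_WATTS) -> float:
    """Power drawn by the GPUs alone, in kilowatts."""
    return gpu_count * watts_per_gpu / 1000


def servers_that_fit(rack_budget_kw: float, server_kw: float = SERVER_KW) -> int:
    """Whole servers that fit inside a rack's power and cooling budget."""
    return int(rack_budget_kw // server_kw)


print(f"GPUs alone in one server: {gpu_power_kw(GPUS_PER_SERVER):.1f} kW")

# Hypothetical rack budgets: a legacy air-cooled rack vs. a liquid-cooled AI rack.
for label, budget_kw in (("legacy rack", 8), ("AI rack", 40)):
    print(f"{label} ({budget_kw} kW): {servers_that_fit(budget_kw)} server(s)")
```

With these assumptions a legacy rack cannot host even one eight-GPU server, which is why power and cooling design has to be settled before rack layouts are.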

Data center deployment strategy at this stage should explicitly include acceptance testing criteria, burn-in procedures, and baseline performance benchmarks, so teams have a reference point throughout the operational data center lifecycle that follows.

Stage 3: Data Center Operations Management & Observability

Once infrastructure is deployed, data center operations management takes over. This is the longest running phase of the data center lifecycle and, in most organizations, the least mature. It is also where the cost of ignoring data center lifecycle management discipline shows up most clearly: as unplanned downtime, runaway support costs, and infrastructure that cannot be trusted at scale.

Effective operations in an enterprise data center lifecycle management context require:

- Infrastructure Observability: Full-stack telemetry across compute (CPU/GPU utilization, thermal metrics, error rates), storage (IOPS, latency, capacity), and network (bandwidth, packet loss, BGP health). Without this, infrastructure decisions are guesswork.

- Infrastructure Reliability Engineering: Proactive monitoring, SLO definition, and runbook-driven response, not reactive firefighting triggered by user complaints or production alerts.

- Infrastructure Automation: Automated provisioning, configuration management (Ansible, Terraform), and self-healing capabilities reduce mean time to recovery (MTTR) and free engineering capacity for higher-value work.

- Infrastructure Capacity Management: Continuous comparison of actual vs. planned utilization, with automated alerts when headroom drops below defined thresholds.
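The capacity management item above can be sketched as a small check that compares actual against planned utilization and flags resources whose headroom has fallen below a threshold. The data class, the field names, and the 20% threshold are assumptions chosen for illustration; in practice the readings would come from your telemetry system.

```python
from dataclasses import dataclass


@dataclass
class CapacityReading:
    resource: str
    capacity: float      # total usable units (GPUs, TB, Gbps, ...)
    planned_use: float   # what the capacity plan expected by now
    actual_use: float    # what telemetry reports


def headroom_fraction(reading: CapacityReading) -> float:
    """Share of capacity still unused."""
    return 1 - reading.actual_use / reading.capacity


def plan_variance(reading: CapacityReading) -> float:
    """Actual minus planned use as a fraction of capacity (positive means over plan)."""
    return (reading.actual_use - reading.planned_use) / reading.capacity


def capacity_alerts(readings: list[CapacityReading], min_headroom: float = 0.20) -> list[str]:
    """Return one message per resource whose headroom is below the threshold."""
    alerts = []
    for r in readings:
        headroom = headroom_fraction(r)
        if headroom < min_headroom:
            alerts.append(
                f"{r.resource}: headroom {headroom:.0%} is below {min_headroom:.0%} "
                f"(variance vs. plan {plan_variance(r):+.0%})"
            )
    return alerts


# Hypothetical readings for two resource pools.
readings = [
    CapacityReading("gpu", capacity=100, planned_use=70, actual_use=90),
    CapacityReading("storage_tb", capacity=1000, planned_use=450, actual_use=500),
]
for message in capacity_alerts(readings):
    print(message)
```

Reporting the variance alongside the alert helps separate "the plan was wrong" from "the plan was right and it is simply time to buy".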

Organizations with mature data center operations management embedded in their data center lifecycle management framework report 30-40% reductions in unplanned downtime, per Maintech lifecycle frameworks. That has a meaningful impact on SLA performance and AI workload continuity.

Stage 4: Data Center Optimization & Enterprise Data Center Modernization

Mid-cycle optimization is where the most value is created in the data center lifecycle management process, and where the most money gets left on the table. Enterprise data center modernization is not a one-time project. It is an ongoing discipline built into the data center lifecycle strategy, not bolted on when something breaks or a budget cycle opens.

Key levers at this stage:

- Workload Consolidation and Right-Sizing: VMs and containers accumulate overallocated resources that sit underused, a form of technical debt. Regular rightsizing reviews can reclaim 20-30% of compute and storage capacity, per industry estimates.

- Data Center Migration and Modernization: End-of-life servers, EOL networking hardware, and obsolete storage arrays increase operational risk and cost. Structured migration programs, whether to newer on-prem hardware or cloud, reduce this exposure systematically.

- Power and Cooling Optimization: In dense AI facilities, PUE optimization and liquid cooling retrofits can cut energy costs while enabling higher compute density.

- Infrastructure Modernization for AI: Legacy infrastructure built for general-purpose compute was not designed for GPU cluster infrastructure, high-bandwidth storage fabrics, or the low-latency networking that AI workloads demand. A structured enterprise infrastructure modernization plan, integrated into the data center lifecycle management framework from the start, is the only way to close this gap without a full rebuild.
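Two of the levers above reduce to simple arithmetic. The sketch below shows a rightsizing pass (observed peak plus a safety margin) and a PUE comparison, where PUE is total facility energy divided by IT equipment energy. The workloads, the 25% margin, the load, and the energy price are all hypothetical.

```python
import math
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class Workload:
    name: str
    allocated_vcpus: int
    peak_vcpus_used: float  # observed peak over the review period


def recommended_vcpus(w: Workload, safety_margin: float = 0.25) -> int:
    """Observed peak plus a margin, rounded up, never above the current allocation."""
    target = math.ceil(w.peak_vcpus_used * (1 + safety_margin))
    return min(w.allocated_vcpus, max(1, target))


def reclaimable_vcpus(workloads: list[Workload], safety_margin: float = 0.25) -> int:
    """Total vCPUs that could be handed back across all workloads."""
    return sum(w.allocated_vcpus - recommended_vcpus(w, safety_margin) for w in workloads)


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def annual_energy_cost(it_load_kw: float, pue_value: float, price_per_kwh: float) -> float:
    """Yearly facility energy bill for a constant IT load at a given PUE."""
    return it_load_kw * pue_value * HOURS_PER_YEAR * price_per_kwh


# Hypothetical fleet and facility figures, for illustration only.
fleet = [Workload("api", 16, 4.0), Workload("db", 8, 7.5), Workload("batch", 32, 12.0)]
print("Reclaimable vCPUs:", reclaimable_vcpus(fleet))

before = annual_energy_cost(1000, pue(1600, 1000), 0.10)
after = annual_energy_cost(1000, pue(1300, 1000), 0.10)
print(f"Energy saving from PUE 1.6 to 1.3: {before - after:,.0f} per year")
```

The rightsizing function never recommends more than the current allocation, so a workload that is already tight (like the hypothetical `db` entry) contributes nothing to the reclaimable total rather than a negative number.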

For guidance on optimizing infrastructure specifically for AI workloads, see: Best Ways to Optimize Infrastructure for AI Workloads.

Stage 5: Decommissioning, Asset Disposition, and Lifecycle Refresh

End of life planning is where many enterprises lose value and absorb risk they did not plan for. Hardware running past its useful life brings rising maintenance costs, vendor support gaps, security vulnerabilities, and performance degradation. A mature data center lifecycle management approach treats decommissioning as a first-class stage, not a cleanup task after everything else is already done.

A structured data center lifecycle management approach to decommissioning includes:

- Proactive EOL planning: Typically starting 12-18 months before vendor end of support dates, giving time for migration planning, procurement cycles, and staged cutover, not emergency procurement at end of life.

- Data sanitization and secure disposal: A non-negotiable step, especially for hardware holding regulated data. NIST SP 800-88 defines acceptable sanitization methods.

- Asset recovery and resale: Well-maintained hardware often retains around 15-30% of its original value at EOL, recoverable through certified IT asset disposition (ITAD) partners.

- Lifecycle refresh planning: Decommissioning feeds back into Stage 1, triggering a new data center lifecycle management planning cycle. Mature organizations run these cycles continuously, not episodically.
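The EOL timing and asset recovery points above can be turned into dates and value ranges with a few lines of code. This is a minimal sketch: the end-of-support date and purchase cost are hypothetical, the 12-18 month window and 15-30% recovery range come from the text, and the date helper clamps the day of month to 28 to keep the arithmetic simple.

```python
from datetime import date


def months_before(d: date, months: int) -> date:
    """Date `months` calendar months before `d`, with the day clamped to 28 or lower."""
    total = d.year * 12 + (d.month - 1) - months
    year, month_index = divmod(total, 12)
    return date(year, month_index + 1, min(d.day, 28))


def eol_planning_window(end_of_support: date,
                        earliest_months: int = 18,
                        latest_months: int = 12) -> tuple[date, date]:
    """Earliest and latest dates to start EOL planning for an asset."""
    return (months_before(end_of_support, earliest_months),
            months_before(end_of_support, latest_months))


def recovery_value_range(original_cost: float,
                         low: float = 0.15, high: float = 0.30) -> tuple[float, float]:
    """Low and high estimates of resale value at end of life."""
    return original_cost * low, original_cost * high


# Hypothetical asset: vendor support ends 30 June 2027, purchased for 100,000.
start, deadline = eol_planning_window(date(2027, 6, 30))
low_value, high_value = recovery_value_range(100_000)
print(f"Start planning between {start} and {deadline}")
print(f"Expected recovery: {low_value:,.0f} to {high_value:,.0f}")
```

Feeding every asset's vendor end-of-support date through a check like this is one way to make EOL planning a scheduled event rather than a reaction.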

For GPU infrastructure, the refresh cadence is compressed. NVIDIA hardware generations (H100 to B200/Blackwell) deliver step change performance improvements on 2–3-year cycles, meaning GPU infrastructure planning for AI datacenters requires more frequent data center lifecycle reviews than general purpose compute.
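One way to keep the compressed GPU cadence from being averaged into the rest of the fleet is to track refresh windows per asset class. The sketch below uses the refresh ranges quoted elsewhere in this article; the class names and status labels are illustrative assumptions.

```python
# Refresh windows in years (earliest, latest), per the ranges quoted in this article.
REFRESH_YEARS = {
    "gpu": (2, 3),
    "compute": (4, 7),
    "network": (4, 5),
    "storage": (5, 7),
}


def refresh_status(asset_class: str, age_years: float) -> str:
    """Classify an asset by where its age falls relative to its class's window."""
    earliest, latest = REFRESH_YEARS[asset_class]
    if age_years >= latest:
        return "past refresh window"
    if age_years >= earliest:
        return "in refresh window"
    return "in service"


# The same age means different things for different asset classes.
for asset_class in ("gpu", "compute"):
    print(f"{asset_class} at 2.5 years: {refresh_status(asset_class, 2.5)}")
```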

Why GPUs Remain Idle in AI Workloads?

| Stage | Primary Focus | Key Activities | Common Pitfalls | Success Metrics |
|---|---|---|---|---|
| Strategic Planning | Capacity & budget alignment | Demand forecasting, site assessment, TCO modeling | Underestimating AI growth, ignoring power constraints | Accuracy of 24-month demand forecasts |
| Design & Buildout | Architecture & deployment | Power/cooling design, network fabric, vendor validation | Monolithic builds, skipping burn-in testing | Time to production, burn-in pass rate |
| Operations | Reliability & performance | Monitoring, automation, incident response | Reactive firefighting, observability gaps | Uptime SLA, MTTR, utilization rate |
| Optimization | Efficiency & modernization | Rightsizing, migration, PUE optimization | One-time projects vs. continuous discipline | Cost per workload, PUE, capacity reclaimed |
| Decommission | Risk reduction & value recovery | EOL planning, secure disposal, asset recovery | Late EOL trigger, compliance gaps | ITAD recovery value, zero compliance incidents |

Building AI Ready Data Center Infrastructure Within Your Lifecycle Framework

The biggest shift in data center lifecycle management over the past three years is that AI-ready infrastructure is no longer a specialty consideration; it is the baseline. Organizations still treating it as an add-on to their data center lifecycle strategy are already behind.

What makes infrastructure AI-ready within a data center lifecycle management context:

- GPU Infrastructure Planning for AI Datacenters requires purpose-built data center lifecycle treatment: GPU density (TFLOPS per rack), memory bandwidth, and NVLink/InfiniBand interconnect topology cannot simply be grafted onto a general compute plan.

- Infrastructure Scalability has to be designed from the start. Scalable data center architecture using modular pod designs, software-defined networking, and programmatic provisioning is the only way to scale from 8 GPUs to 256 without a full rebuild.

- Cloud-native infrastructure and hybrid flexibility matter because not every AI workload belongs on-premises. A mature data center lifecycle management strategy maps burst training, experimentation, and variable-demand inference to the right infrastructure tier as requirements shift.

- Infrastructure resilience and N+1 or N+2 redundancy for power, cooling, and networking are non-negotiable in AI-critical zones. Training jobs run for days or weeks, and a single mid-run hardware failure wastes significant compute time and delays roadmaps.
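The N+1 and N+2 requirement in the last item can be checked mechanically: N is the number of units needed to carry the load, and the redundancy level is how many installed units sit beyond that. A minimal sketch follows, with hypothetical cooling figures.

```python
import math


def units_required(load_kw: float, unit_capacity_kw: float) -> int:
    """N: the minimum number of units needed to carry the load."""
    return math.ceil(load_kw / unit_capacity_kw)


def redundancy_level(installed_units: int, unit_capacity_kw: float, load_kw: float) -> int:
    """Spare units beyond N (1 means N+1, 2 means N+2, negative means under-built)."""
    return installed_units - units_required(load_kw, unit_capacity_kw)


def survives_failures(installed_units: int, unit_capacity_kw: float,
                      load_kw: float, failures: int) -> bool:
    """True if the remaining units can still carry the full load."""
    remaining_kw = (installed_units - failures) * unit_capacity_kw
    return remaining_kw >= load_kw


# Hypothetical AI zone: 900 kW of heat load, cooling units rated at 300 kW each.
LOAD_KW, UNIT_KW, INSTALLED = 900, 300, 4
print(f"Redundancy: N+{redundancy_level(INSTALLED, UNIT_KW, LOAD_KW)}")
print("Survives one failure:", survives_failures(INSTALLED, UNIT_KW, LOAD_KW, 1))
print("Survives two failures:", survives_failures(INSTALLED, UNIT_KW, LOAD_KW, 2))
```

The same check applies to power feeds and network paths; what changes is the unit of capacity, not the logic.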

For organizations just beginning this work, our guide on Achieving AI Readiness covers the foundational steps before infrastructure investment decisions are made. For evaluating whether legacy infrastructure can support AI, see: Modernizing Legacy Infrastructure: Where to Start in 2025.

Data Center Lifecycle Management Best Practices: What Mature Organizations Do Differently

Organizations that consistently extract value from their infrastructure share several practices, and none of them are accidents.

- Data center lifecycle management is owned continuously with dedicated teams, tooling, and KPIs; infrastructure is treated as a product, not a one-time project.

- NVIDIA Omniverse DSX and similar platforms let data center infrastructure lifecycle management teams simulate power, thermal, and networking changes before deployment, reducing costly buildout rework.

- GPU cluster infrastructure runs on a 2-3 year refresh cycle driven by NVIDIA/AMD generations, while general compute follows a 4-7 year cycle. Conflating the two shows up not in the plan but in the bill.

- Unit economics (cost per GPU-hour, cost per petabyte, cost per workload) are tracked throughout the data center lifecycle, not just at procurement, driving year-over-year gains through data center optimization strategies.

- For major transitions such as GPU datacenter buildouts, hybrid cloud migrations, or legacy modernization programs, external data center lifecycle management consulting expertise accelerates timelines and reduces risk where in-house GPU infrastructure planning depth is limited.
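The unit economics practice above can be made concrete with a cost per GPU-hour calculation. This is a minimal sketch with hypothetical figures; it uses straight-line depreciation and counts only utilized hours, so idle capacity shows up directly in the unit cost.

```python
HOURS_PER_YEAR = 24 * 365


def cost_per_gpu_hour(capex: float, lifetime_years: float, annual_opex: float,
                      gpu_count: int, utilization: float) -> float:
    """Yearly cost (straight-line capex plus opex) divided by utilized GPU-hours."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    yearly_cost = capex / lifetime_years + annual_opex
    used_gpu_hours = gpu_count * HOURS_PER_YEAR * utilization
    return yearly_cost / used_gpu_hours


# Hypothetical cluster: 64 GPUs, 3.0M capex over 3 years, 400k yearly opex.
for utilization in (0.80, 0.40):
    unit_cost = cost_per_gpu_hour(3_000_000, 3, 400_000, 64, utilization)
    print(f"Utilization {utilization:.0%}: {unit_cost:.2f} per GPU-hour")
```

Halving utilization doubles the cost of every useful GPU-hour, which is why this metric is worth tracking after procurement and not only before it.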

For multi-site infrastructure, see: How to Choose a Data Center Partner and Managed Data Center Services.

Conclusion: Your Data Center Lifecycle Is Your AI Roadmap

Data center lifecycle management is not a back-office IT function. It is where infrastructure investment either compounds or erodes. Aptly’s Global Data Center Operations (GDCO) model is built precisely for this.

As the only provider trusted by Microsoft to design, build, and support third-party hyperscale data centers, Aptly delivers end-to-end managed data center services, combining hands-on in-datacenter execution with a 24×7 Command Center for monitoring, incident management, and Tier 2/3 support. Services span data center infrastructure deployment, data center operations management, and full data center lifecycle management, structured across Core, Scale, and Optimize tiers so enterprises can start with stable operations and expand without rebuilding from scratch.

From GPU cluster infrastructure deployment and scalable data center architecture to infrastructure modernization, proactive data center capacity planning, and up to 99.9% uptime SLAs, Aptly manages the environment so your teams stay focused on the business.

If your data center lifecycle management strategy needs a reset, explore how Aptly can help at aptlytech.com.

Frequently Asked Questions (FAQ)

- What is data center lifecycle management?

- Data center lifecycle management (DCLM) is the discipline of governing infrastructure from capacity planning through decommissioning in a structured, continuous cycle, ensuring every investment is aligned with business demand and retired at the right time.

- What are the main stages of the data center lifecycle?

- Five stages: (1) Strategic Planning, (2) Design & Buildout, (3) Operations & Observability, (4) Optimization & Modernization, and (5) Decommissioning & Refresh. Each feeds into the next as a continuous cycle rather than a one-way sequence.

- How often should data center infrastructure be refreshed?

- General compute: 4-7 years. Networking: 4-5 years. Storage: 5-7 years. GPU cluster infrastructure for AI: 2-3 years, compressed by rapid NVIDIA/AMD hardware generation cycles. A mature data center lifecycle management strategy tracks these separately.

- How is data center lifecycle management different for AI workloads?

- AI racks run hotter (50-100kW vs. 5-10kW for traditional racks), refresh faster, need liquid cooling, and demand purpose-built interconnects. Effective GPU infrastructure planning for AI datacenters must be integrated into data center lifecycle management from day one, not retrofitted later.

- What role does hybrid cloud play in data center lifecycle strategy?

- Hybrid cloud data center architecture means lifecycle strategy now spans on-prem, colocation, and cloud simultaneously. The key decision: which workloads belong where, and when do they move? A mature data center lifecycle management framework maps this explicitly and revisits it as requirements evolve.

- What is a data center lifecycle management framework?

- A structured set of policies, processes, and tools that govern infrastructure across its full lifecycle, covering refresh timelines, capacity planning cadence, observability standards, migration criteria, ITAD procedures, and FinOps integration. Organizations with mature enterprise data center lifecycle management frameworks consistently outperform peers on uptime, cost efficiency, and infrastructure agility.
